Probability distribution

From Wikipedia, the free encyclopedia

In probability theory and statistics, a probability distribution identifies either the probability of each value of a random variable (when the variable is discrete), or the probability of the value falling within a particular interval (when the variable is continuous).[1] The probability distribution describes the range of possible values that a random variable can attain and the probability that the value of the random variable is within any (measurable) subset of that range.

When the random variable takes values in the set of real numbers, the probability distribution is completely described by the cumulative distribution function, whose value at each real x is the probability that the random variable is smaller than or equal to x.

The concepts of the probability distribution and of the random variable it describes underlie the mathematical discipline of probability theory and the science of statistics. There is spread or variability in almost any value that can be measured in a population (e.g. height of people, durability of a metal); almost all measurements are made with some intrinsic error; and in physics many processes are described probabilistically, from the kinetic properties of gases to the quantum mechanical description of fundamental particles. For these and many other reasons, single numbers are often inadequate for describing a quantity, while probability distributions are often more appropriate.

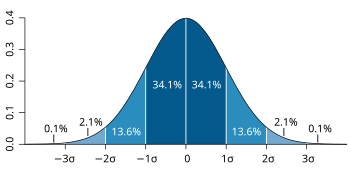

Different probability distributions arise in different applications. One of the most important is the normal distribution, also known as the Gaussian distribution or the bell curve, which approximates many naturally occurring distributions. The toss of a fair coin yields another familiar distribution, where the possible values are heads or tails, each with probability 1/2.

Rigorous definitions

In probability theory, every random variable is a function defined on a sample space equipped with a probability measure, which assigns a probability to every subset (more precisely, every measurable subset) of the sample space in such a way that the probability axioms are satisfied. The probability distribution of the random variable is a probability measure defined over the state space (the set of values the variable can take) instead of the sample space: it assigns to each measurable subset of the state space the probability of its inverse image in the sample space. In other words, the probability distribution of a random variable is the pushforward measure of the probability measure on the sample space.

More formally, given a random variable $X: \Omega \rightarrow Y$ between a probability space $(\Omega, \mathcal{F}, P)$, the sample space, and a measurable space $(Y, \Sigma)$, called the state space, a probability distribution on $(Y, \Sigma)$ is a probability measure $X_{*}P: \Sigma \rightarrow [0,1]$ on the state space, where $X_{*}P$ is the pushforward measure of $P$, that is, $X_{*}P(A) = P(X^{-1}(A))$ for every $A \in \Sigma$.
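On a finite sample space the pushforward construction reduces to adding up the probabilities of the outcomes in each preimage. The following is a minimal sketch under assumptions chosen purely for illustration: the sample space is two fair dice, the random variable is their sum, and the names `sample_space`, `total`, and `pushforward` are hypothetical.

```python
from fractions import Fraction
from itertools import product

# Assumed sample space: ordered pairs of faces of two fair dice,
# each outcome carrying probability 1/36.
sample_space = {omega: Fraction(1, 36) for omega in product(range(1, 7), repeat=2)}

def total(omega):
    """Random variable X: maps an outcome (a pair of faces) to its sum."""
    return omega[0] + omega[1]

def pushforward(P, X):
    """Return the distribution of X as a dict: value y -> P(X^{-1}({y}))."""
    dist = {}
    for omega, prob in P.items():
        y = X(omega)
        dist[y] = dist.get(y, Fraction(0)) + prob
    return dist

dist = pushforward(sample_space, total)

# The probability of a subset A of the state space is the sum over its points.
prob_even = sum(p for y, p in dist.items() if y % 2 == 0)
```

Exact fractions are used so that the total mass of the resulting distribution can be checked to equal 1 without rounding error.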

Probability distributions of real-valued random variables

Because a probability distribution Pr on the real line is determined by the probability of being in a half-open interval Pr(a, b], the probability distribution of a real-valued random variable X is completely characterized by its cumulative distribution function:

$$F(x) = \Pr \left[ X \le x \right] \qquad \forall x \in \mathbb{R}.$$
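The link between the cumulative distribution function F(x) = Pr[X ≤ x] and half-open intervals is the identity Pr(a < X ≤ b) = F(b) − F(a). A small sketch, assuming a normal distribution as the example and using the standard closed form of its cumulative distribution function in terms of the error function; the function names `normal_cdf` and `interval_probability` are hypothetical:

```python
import math

def normal_cdf(x, mu=0.0, sigma=1.0):
    """F(x) = Pr[X <= x] for a normal random variable, via the error function."""
    return 0.5 * (1.0 + math.erf((x - mu) / (sigma * math.sqrt(2.0))))

def interval_probability(cdf, a, b):
    """Pr(a < X <= b) = F(b) - F(a) for the half-open interval (a, b]."""
    return cdf(b) - cdf(a)

# Probability that a standard normal variable lies within one standard
# deviation of its mean.
p_one_sigma = interval_probability(normal_cdf, -1.0, 1.0)
```

Any other cumulative distribution function can be passed to `interval_probability` in the same way, since the identity does not depend on the normal distribution.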

Discrete probability distribution

A probability distribution is called discrete if its cumulative distribution function only increases in jumps. More precisely, a probability distribution is discrete if there is a finite or countable set whose probability is 1.

For many familiar discrete distributions, the set of possible values is topologically discrete in the sense that all its points are isolated points. However, there are discrete distributions for which this countable set is dense on the real line.

Discrete distributions are characterized by a probability mass function p such that

$$\Pr \left[ X = x \right] = p(x).$$
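For a discrete distribution, the probability mass function p(x) = Pr[X = x] and the cumulative distribution function carry the same information: the cumulative distribution function is a step function whose jump at each atom x equals p(x). A minimal sketch, assuming a binomial distribution with 3 trials and success probability 1/2 as the example; `binomial_pmf` and `cdf_from_pmf` are hypothetical helper names:

```python
from fractions import Fraction
from math import comb

def binomial_pmf(n, p):
    """Return {k: Pr[X = k]} for k = 0..n, with X binomial(n, p)."""
    return {k: comb(n, k) * p**k * (1 - p)**(n - k) for k in range(n + 1)}

def cdf_from_pmf(pmf, x):
    """F(x) = sum of p(k) over all atoms k <= x; constant between atoms."""
    return sum((prob for k, prob in pmf.items() if k <= x), Fraction(0))

pmf = binomial_pmf(3, Fraction(1, 2))

# The jump of F at the atom 1 equals p(1).
jump_at_one = cdf_from_pmf(pmf, 1) - cdf_from_pmf(pmf, Fraction(999, 1000))
```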

Continuous probability distribution

By one convention, a probability distribution is called continuous if its cumulative distribution function is continuous, which means that it belongs to a random variable X for which Pr[ X = x ] = 0 for all x in R.

Another convention reserves the term continuous probability distribution for absolutely continuous distributions. These distributions can be characterized by a probability density function: a non-negative Lebesgue integrable function f defined on the real numbers such that

$$F(x) = \Pr \left[ X \le x \right] = \int_{-\infty}^{x} f(t)\,dt.$$
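For an absolutely continuous distribution, the cumulative distribution function is recovered from the density by integration, F(x) = ∫ f(t) dt taken from −∞ to x. The sketch below checks this numerically for an exponential density, which is an assumed example rather than one taken from the text; the midpoint rule, the step count, and the names `exponential_pdf` and `cdf_by_integration` are likewise illustrative choices.

```python
import math

def exponential_pdf(t, rate=1.0):
    """Density f(t) = rate * exp(-rate * t) for t >= 0, and 0 otherwise."""
    return rate * math.exp(-rate * t) if t >= 0 else 0.0

def cdf_by_integration(pdf, x, lower=0.0, steps=10_000):
    """Approximate F(x) with the midpoint rule on [lower, x].

    Assumes the density is zero below `lower`, so the integral from
    minus infinity reduces to an integral starting at `lower`.
    """
    if x <= lower:
        return 0.0
    h = (x - lower) / steps
    return h * sum(pdf(lower + (i + 0.5) * h) for i in range(steps))

approx = cdf_by_integration(exponential_pdf, 2.0)
exact = 1.0 - math.exp(-2.0)  # closed-form exponential CDF at x = 2
```

The numerical value agrees with the closed form up to the discretization error of the midpoint rule.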

Discrete distributions and some continuous distributions (such as the Cantor distribution, whose cumulative distribution function is the devil's staircase) do not admit such a density.
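The devil's staircase (the Cantor function) can be evaluated from the ternary digits of its argument, which gives a concrete view of a cumulative distribution function that is continuous yet constant on every removed middle third, so that no density integrates to it. The following is a rough sketch; the function name `cantor_cdf` and the truncation depth are assumptions made for illustration.

```python
def cantor_cdf(x, depth=40):
    """Approximate the Cantor function on [0, 1] from ternary digits of x.

    Ternary digits 0 and 2 become binary digits 0 and 1; the first ternary
    digit equal to 1 contributes a final binary 1 and ends the expansion.
    """
    if x <= 0:
        return 0.0
    if x >= 1:
        return 1.0
    total, scale = 0.0, 0.5
    for _ in range(depth):
        x *= 3
        digit = int(x)
        x -= digit
        if digit == 1:
            # x lies in a removed middle third, where the function is flat.
            return total + scale
        if digit == 2:
            total += scale
        scale /= 2
    return total

# The function takes the same value across the whole middle third (1/3, 2/3).
plateau = [cantor_cdf(t) for t in (0.4, 0.5, 0.6)]
```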

Terminology

The support of a distribution is the smallest closed set whose complement has probability zero. Informally, it may be understood as the set of values that the random variable can actually take.

A discrete random variable is a random variable whose probability distribution is discrete. Similarly, a continuous random variable is a random variable whose probability distribution is continuous.

Some properties

- The probability density function of the sum of two independent random variables is the convolution of each of their density functions.

- The probability density function of the difference of two independent random variables is the cross-correlation of their density functions.

- Probability distributions are not a vector space – they are not closed under linear combinations, as these do not preserve non-negativity or total integral 1 – but they are closed under convex combination, thus forming a convex subset of the space of functions (or measures).
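The convolution and convex-combination properties above can be illustrated with their discrete analogue, using probability mass functions in place of densities. This is a sketch under that assumption; the dictionaries `die` and `coin` and the helpers `convolve` and `mixture` are hypothetical names introduced for the example.

```python
from fractions import Fraction

def convolve(p, q):
    """PMF of X + Y for independent X ~ p and Y ~ q (dicts value -> probability)."""
    out = {}
    for x, px in p.items():
        for y, qy in q.items():
            out[x + y] = out.get(x + y, Fraction(0)) + px * qy
    return out

def mixture(p, q, w):
    """Convex combination w*p + (1 - w)*q, assuming 0 <= w <= 1."""
    keys = set(p) | set(q)
    return {k: w * p.get(k, Fraction(0)) + (1 - w) * q.get(k, Fraction(0))
            for k in keys}

die = {face: Fraction(1, 6) for face in range(1, 7)}
coin = {0: Fraction(1, 2), 1: Fraction(1, 2)}

two_dice = convolve(die, die)               # distribution of the sum of two dice
mixed = mixture(die, coin, Fraction(1, 4))  # still a valid PMF

# A linear combination that is not convex leaves the set of distributions:
# doubling every probability gives total mass 2 instead of 1.
not_a_pmf = {k: 2 * v for k, v in die.items()}
```

The convolution and the mixture both sum to 1 and stay non-negative, while the doubled function does not, which is the sense in which distributions form a convex set rather than a vector space.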

Notes

- [1] B.S. Everitt (2006). The Cambridge Dictionary of Statistics, Third Edition, pp. 313–314. Cambridge University Press, Cambridge. ISBN 0521690277.

External links

- Interactive Discrete and Continuous Probability Distributions

- A Compendium of Common Probability Distributions

- Statistical Distributions - Overview

- Probability Distributions in Quant Equation Archive, sitmo

- A Probability Distribution Calculator

- Distribution Explorer, a mixed C++ and C# Windows application that allows you to explore the properties of 20+ statistical distributions and calculate CDF, PDF and quantiles. Written using open-source C++ from the Boost Math Toolkit library.
