AdaBoost

From Wikipedia, the free encyclopedia

AdaBoost, short for Adaptive Boosting, is a machine learning algorithm formulated by Yoav Freund and Robert Schapire. It is a meta-algorithm and can be used in conjunction with many other learning algorithms to improve their performance. AdaBoost is adaptive in the sense that each subsequent classifier is tweaked in favor of the instances misclassified by previous classifiers. AdaBoost is sensitive to noisy data and outliers. On some problems, however, it can be less susceptible to overfitting than other learning algorithms.

AdaBoost calls a weak classifier repeatedly in a series of rounds $t = 1, \ldots, T$. On each call, a distribution of weights $D_t$ is updated to indicate the importance of each example in the data set for the classification. On each round, the weight of each incorrectly classified example is increased (or, alternatively, the weight of each correctly classified example is decreased), so that the new classifier focuses more on those examples.


The algorithm for the binary classification task

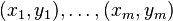

Given:  where

where

Initialise

For  :

:

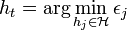

- Find the classifier

that minimizes the error with respect to the distribution Dt:

that minimizes the error with respect to the distribution Dt:

, where

, where ![\epsilon_{j} = \sum_{i=1}^{m} D_{t}(i)[y_i \ne h_{j}(x_{i})]](http://upload.wikimedia.org/math/4/4/d/44d8d3c98bbcb6f1c0d015334906fb77.png)

- Prerequisite: εt < 0.5, otherwise stop.

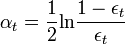

- Choose

, typically

, typically  where εt is the weighted error rate of classifier ht.

where εt is the weighted error rate of classifier ht. - Update:

where Zt is a normalization factor (chosen so that Dt + 1 will be a probability distribution, i.e. sum one over all x).

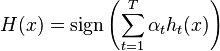

Output the final classifier:
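The rounds described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the weak learners are assumed to be one-dimensional threshold "stumps", and the names `stump_predict`, `weighted_error`, `adaboost` and `classify`, the clamp on the error, and the toy data set are all hypothetical choices made for this example.

```python
import math

def stump_predict(stump, x):
    """Threshold stump on a 1-D input: polarity if x > threshold, else -polarity."""
    threshold, polarity = stump
    return polarity if x > threshold else -polarity

def weighted_error(stump, xs, ys, weights):
    """epsilon_j: total weight of the examples that the stump misclassifies."""
    return sum(w for x, y, w in zip(xs, ys, weights)
               if stump_predict(stump, x) != y)

def adaboost(xs, ys, rounds):
    """Run up to `rounds` boosting rounds; return a list of (alpha_t, h_t) pairs."""
    m = len(xs)
    weights = [1.0 / m] * m  # D_1(i) = 1/m

    # Assumed weak-learner pool: one threshold below all points and one
    # between each pair of neighbouring points, each with both polarities.
    points = sorted(set(xs))
    thresholds = [points[0] - 1.0] + [(a + b) / 2.0
                                      for a, b in zip(points, points[1:])]
    pool = [(th, pol) for th in thresholds for pol in (+1, -1)]

    ensemble = []
    for _ in range(rounds):
        # Pick the stump with the smallest weighted error under D_t.
        h_t = min(pool, key=lambda s: weighted_error(s, xs, ys, weights))
        eps = weighted_error(h_t, xs, ys, weights)
        if eps >= 0.5:  # prerequisite violated: stop
            break
        # Assumption of this sketch: clamp eps so a perfect stump
        # does not cause a division by zero.
        eps = max(eps, 1e-10)
        alpha = 0.5 * math.log((1.0 - eps) / eps)
        ensemble.append((alpha, h_t))

        # D_{t+1}(i) = D_t(i) * exp(-alpha_t * y_i * h_t(x_i)) / Z_t
        weights = [w * math.exp(-alpha * y * stump_predict(h_t, x))
                   for x, y, w in zip(xs, ys, weights)]
        z = sum(weights)
        weights = [w / z for w in weights]
    return ensemble

def classify(ensemble, x):
    """H(x) = sign(sum_t alpha_t * h_t(x)); a score of exactly 0 maps to +1."""
    score = sum(alpha * stump_predict(h, x) for alpha, h in ensemble)
    return 1 if score >= 0 else -1

# Toy data on which no single stump separates the two classes.
xs = [0, 1, 2, 3, 4, 5, 6, 7, 8, 9]
ys = [1, 1, 1, -1, -1, -1, 1, 1, 1, -1]
ensemble = adaboost(xs, ys, rounds=3)
training_errors = sum(classify(ensemble, x) != y for x, y in zip(xs, ys))
```

On this toy set the best single stump misclassifies three of the ten points, while the weighted vote of three stumps classifies all ten training points correctly.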

The equation to update the distribution $D_t$ is constructed so that:

$\exp(-\alpha_t y_i h_t(x_i)) < 1$ if $y_i = h_t(x_i)$, and $\exp(-\alpha_t y_i h_t(x_i)) > 1$ if $y_i \ne h_t(x_i)$

Thus, after selecting an optimal classifier $h_t$ for the distribution $D_t$, the examples $x_i$ that the classifier $h_t$ identified correctly are weighted less and those that it identified incorrectly are weighted more. Therefore, when the algorithm is testing the classifiers on the distribution $D_{t+1}$, it will select a classifier that better identifies those examples that the previous classifier missed.
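As a small worked illustration of this reweighting (the numbers are made up for this example and do not come from the article), suppose $m = 5$ examples carry uniform weights $D_t(i) = 0.2$ and $h_t$ misclassifies exactly one of them:

```latex
\[
\begin{aligned}
\epsilon_t &= 0.2, \qquad
\alpha_t = \tfrac{1}{2}\ln\frac{1 - 0.2}{0.2} = \tfrac{1}{2}\ln 4 = \ln 2 \approx 0.693 \\
\text{correct:}\quad & D_t(i)\, e^{-\alpha_t} = 0.2 \cdot \tfrac{1}{2} = 0.1 \\
\text{misclassified:}\quad & D_t(i)\, e^{\alpha_t} = 0.2 \cdot 2 = 0.4 \\
Z_t &= 4 \cdot 0.1 + 0.4 = 0.8 \\
D_{t+1}(i) &= \frac{0.1}{0.8} = 0.125 \ \text{(correct)}, \qquad
D_{t+1}(i) = \frac{0.4}{0.8} = 0.5 \ \text{(misclassified)}
\end{aligned}
\]
```

With the typical choice of $\alpha_t$, the misclassified examples end up carrying half of the total weight. The classifier $h_t$ therefore has weighted error exactly 0.5 on $D_{t+1}$, which is why it cannot satisfy the prerequisite again in the next round.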

Statistical Understanding of Boosting

Boosting can be seen as minimization of a convex loss function over a convex set of functions. [1] Specifically, the loss being minimized is the exponential loss

$\sum_i e^{-y_i f(x_i)}$

and we are seeking a function

$f = \sum_t \alpha_t h_t$
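One way to connect this view to the typical choice of the classifier weight is the standard stagewise argument, sketched here under the assumption that the classifiers are added one at a time. The symbol $f_{t-1}$ for the partial sum $\sum_{s<t} \alpha_s h_s$ is introduced only for this illustration; $D_t$ and $\epsilon_t$ are the weight distribution and weighted error of round $t$.

```latex
\[
\begin{aligned}
\sum_i e^{-y_i \left( f_{t-1}(x_i) + \alpha h_t(x_i) \right)}
  &\propto \sum_i D_t(i)\, e^{-\alpha y_i h_t(x_i)},
  \qquad D_t(i) \propto e^{-y_i f_{t-1}(x_i)} \\
  &= (1 - \epsilon_t)\, e^{-\alpha} + \epsilon_t\, e^{\alpha} \\
\frac{d}{d\alpha} = 0:\quad
  & -(1 - \epsilon_t)\, e^{-\alpha} + \epsilon_t\, e^{\alpha} = 0
  \;\Longrightarrow\;
  \alpha = \tfrac{1}{2} \ln \frac{1 - \epsilon_t}{\epsilon_t}
\end{aligned}
\]
```

Under these assumptions, the value $\frac{1}{2} \ln \frac{1 - \epsilon_t}{\epsilon_t}$ is the step along $h_t$ that minimizes the exponential loss, and the minimum of the weighted sum equals $2\sqrt{\epsilon_t (1 - \epsilon_t)}$.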


References

- [1] T. Zhang, "Convex Risk Minimization", Annals of Statistics, 2004.

External links

- AdaBoost in C++, an implementation of AdaBoost in C++ and Boost

- Boosting.org, a site on boosting and related ensemble learning methods

- JBoost, a site offering a classification and visualization package, implementing AdaBoost among other boosting algorithms.

- AdaBoost Presentation summarizing AdaBoost (see page 4 for an illustrated example of performance)

- A Short Introduction to Boosting, an introduction to AdaBoost by Freund and Schapire from 1999

- A decision-theoretic generalization of on-line learning and an application to boosting, Journal of Computer and System Sciences, no. 55, 1997 (the original paper by Yoav Freund and Robert E. Schapire in which AdaBoost was first introduced)

- An applet demonstrating AdaBoost

- Ensemble Based Systems in Decision Making, R. Polikar, IEEE Circuits and Systems Magazine, vol.6, no.3, pp. 21-45, 2006. A tutorial article on ensemble systems including pseudocode, block diagrams and implementation issues for AdaBoost and other ensemble learning algorithms.

- A Matlab Implementation of AdaBoost

- Additive logistic regression: a statistical view of boosting, by Jerome Friedman, Trevor Hastie, and Robert Tibshirani. A paper introducing probabilistic theory for AdaBoost and introducing GentleBoost.

- OpenCV implementation of several boosting variants