Covariance matrix

From Wikipedia, the free encyclopedia

In statistics and probability theory, the covariance matrix is the matrix of covariances between the elements of a random vector. It is the natural generalization to higher dimensions of the concept of the variance of a scalar-valued random variable.


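Definition

If the entries in the column vector

$$\mathbf{X} = \begin{bmatrix} X_1 \\ \vdots \\ X_n \end{bmatrix}$$

are random variables, each with finite variance, then the covariance matrix Σ is the matrix whose (i, j) entry is the covariance

$$\Sigma_{ij} = \operatorname{cov}(X_i, X_j) = \mathrm{E}[(X_i - \mu_i)(X_j - \mu_j)],$$

where

$$\mu_i = \mathrm{E}(X_i)$$

is the expected value of the ith entry in the vector X. In other words, we have

$$\Sigma = \begin{bmatrix}
\mathrm{E}[(X_1 - \mu_1)(X_1 - \mu_1)] & \mathrm{E}[(X_1 - \mu_1)(X_2 - \mu_2)] & \cdots & \mathrm{E}[(X_1 - \mu_1)(X_n - \mu_n)] \\
\mathrm{E}[(X_2 - \mu_2)(X_1 - \mu_1)] & \mathrm{E}[(X_2 - \mu_2)(X_2 - \mu_2)] & \cdots & \mathrm{E}[(X_2 - \mu_2)(X_n - \mu_n)] \\
\vdots & \vdots & \ddots & \vdots \\
\mathrm{E}[(X_n - \mu_n)(X_1 - \mu_1)] & \mathrm{E}[(X_n - \mu_n)(X_2 - \mu_2)] & \cdots & \mathrm{E}[(X_n - \mu_n)(X_n - \mu_n)]
\end{bmatrix}.$$

The inverse of this matrix, $\Sigma^{-1}$, if it exists, is called the inverse covariance matrix or the precision matrix.[1]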
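The definition is stated in terms of expected values, which are rarely known in practice. As a minimal sketch, the following Python code replaces each expectation with an average over observations, using the common N − 1 divisor. The data, the mixing matrix, and the function name `covariance_matrix` are assumptions made for illustration and are not part of the definition.

```python
import numpy as np

def covariance_matrix(samples):
    """Estimate the covariance matrix from an (N, n) array of N observations."""
    # Subtract the sample mean of each entry (the estimate of mu_i).
    centered = samples - samples.mean(axis=0)
    # Average the outer products (x - mu)(x - mu)^T, with the N - 1 divisor.
    return centered.T @ centered / (samples.shape[0] - 1)

rng = np.random.default_rng(0)
# Hypothetical data: 500 observations of a 3-dimensional random vector.
mixing = np.array([[1.0, 0.5, 0.0],
                   [0.0, 1.0, 0.3],
                   [0.0, 0.0, 1.0]])
samples = rng.normal(size=(500, 3)) @ mixing

sigma = covariance_matrix(samples)
# Inverse covariance (precision) matrix; this requires sigma to be nonsingular.
precision = np.linalg.inv(sigma)
```

The result agrees with `np.cov(samples, rowvar=False)`, which uses the same divisor by default.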

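Generalization of the variance

The definition above is equivalent to the matrix equality

$$\Sigma = \mathrm{E}\left[ \left( \mathbf{X} - \mathrm{E}[\mathbf{X}] \right) \left( \mathbf{X} - \mathrm{E}[\mathbf{X}] \right)^\top \right].$$

This form can be seen as a generalization of the scalar-valued variance to higher dimensions. Recall that for a scalar-valued random variable X

$$\sigma^2 = \mathrm{var}(X) = \mathrm{E}[(X - \mu)^2],$$

where

$$\mu = \mathrm{E}(X).$$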

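Conflicting nomenclatures and notations

Nomenclatures differ. Some statisticians, following the probabilist William Feller, call this matrix the variance of the random vector X, because it is the natural generalization to higher dimensions of the 1-dimensional variance. Others call it the covariance matrix, because it is the matrix of covariances between the scalar components of the vector X. Thus

$$\operatorname{var}(\mathbf{X}) = \operatorname{cov}(\mathbf{X}) = \mathrm{E}\left[ (\mathbf{X} - \mathrm{E}[\mathbf{X}]) (\mathbf{X} - \mathrm{E}[\mathbf{X}])^\top \right].$$

However, the notation for the cross-covariance between two vectors is standard:

$$\operatorname{cov}(\mathbf{X}, \mathbf{Y}) = \mathrm{E}\left[ (\mathbf{X} - \mathrm{E}[\mathbf{X}]) (\mathbf{Y} - \mathrm{E}[\mathbf{Y}])^\top \right].$$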

The var notation is found in William Feller's two-volume book An Introduction to Probability Theory and Its Applications, but both forms are quite standard and there is no ambiguity between them.

The matrix Σ is also often called the variance-covariance matrix since the diagonal terms are in fact variances.

[edit] Properties

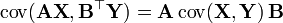

For ![\Sigma=\mathrm{E} \left[ \left( \textbf{X} - \mathrm{E}[\textbf{X}] \right) \left( \textbf{X} - \mathrm{E}[\textbf{X}] \right)^\top \right]](http://upload.wikimedia.org/math/1/8/1/1811f942680a72974597be42c28d31d2.png) and

and  , where X is a random p-dimensional variable and Y a random q-dimensional variable, the following basic properties apply:

, where X is a random p-dimensional variable and Y a random q-dimensional variable, the following basic properties apply:

is positive semi-definite

is positive semi-definite

- If p = q, then

- If

and

and  are independent, then

are independent, then

where  and

and  are random

are random  vectors,

vectors,  is a random

is a random  vector,

vector,  is

is  vector,

vector,  and

and  are

are  matrices.

matrices.
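Several of these identities also hold exactly when every expectation is replaced by a sample average, so they can be checked numerically. The sketch below does this for the affine-transformation rule and the sum rule. The sample data and the helper names `var` and `cov` are assumptions chosen for this illustration.

```python
import numpy as np

rng = np.random.default_rng(1)
p, q, n_obs = 3, 2, 1000

# Hypothetical samples: each row is one observation of the p-dimensional vector X.
X = rng.normal(size=(n_obs, p)) @ rng.normal(size=(p, p))
A = rng.normal(size=(q, p))   # a q x p matrix
a = rng.normal(size=q)        # a constant q-vector

def var(samples):
    """Sample covariance matrix of an (N, k) array of observations."""
    return np.atleast_2d(np.cov(samples, rowvar=False))

def cov(u, v):
    """Sample cross-covariance matrix between (N, k) and (N, m) arrays."""
    uc = u - u.mean(axis=0)
    vc = v - v.mean(axis=0)
    return uc.T @ vc / (u.shape[0] - 1)

# var(AX + a) compared with A var(X) A^T
lhs_affine = var(X @ A.T + a)
rhs_affine = A @ var(X) @ A.T

# A second p-dimensional vector, deliberately correlated with X (the case p = q).
Y = rng.normal(size=(n_obs, p)) + 0.5 * X
lhs_sum = var(X + Y)
rhs_sum = var(X) + cov(X, Y) + cov(Y, X) + var(Y)
```

In both cases the left-hand and right-hand sides agree up to floating-point rounding.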

The covariance matrix is a useful tool in many different areas. From it a transformation matrix can be derived that completely decorrelates the data or, from a different point of view, gives an optimal basis for representing the data in a compact way (see Rayleigh quotient for a formal proof and additional properties of covariance matrices). This technique is called principal component analysis (PCA) or the Karhunen-Loève transform (KL-transform).
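One common way to obtain such a decorrelating transformation is an eigendecomposition of the sample covariance matrix. The following is a minimal sketch under that assumption; the data and the mixing matrix are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(2)
# Hypothetical correlated data: 2000 observations of a 3-dimensional vector.
mixing = np.array([[2.0, 0.0, 0.0],
                   [1.0, 1.0, 0.0],
                   [0.5, 0.3, 0.2]])
data = rng.normal(size=(2000, 3)) @ mixing.T

sigma = np.cov(data, rowvar=False)

# sigma is symmetric, so eigh returns real eigenvalues (in ascending order)
# and orthonormal eigenvectors as columns.
eigenvalues, eigenvectors = np.linalg.eigh(sigma)
order = np.argsort(eigenvalues)[::-1]   # reorder by decreasing variance
eigenvalues, eigenvectors = eigenvalues[order], eigenvectors[:, order]

# Project the centered data onto the eigenvectors (the principal axes).
scores = (data - data.mean(axis=0)) @ eigenvectors
sigma_scores = np.cov(scores, rowvar=False)   # diagonal up to rounding error
```

The covariance matrix of the projected data is diagonal, with the eigenvalues on the diagonal, so the transformed components are uncorrelated. Keeping only the leading columns of `eigenvectors` gives the compact representation.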

As a linear operator

Applied to one vector, the covariance matrix maps a linear combination, c, of the random variables, X, onto a vector of covariances with those variables: $\mathbf{c}^\top \Sigma = \operatorname{cov}(\mathbf{c}^\top \mathbf{X}, \mathbf{X})$. Treated as a bilinear form, it yields the covariance between the two linear combinations: $\mathbf{d}^\top \Sigma \mathbf{c} = \operatorname{cov}(\mathbf{d}^\top \mathbf{X}, \mathbf{c}^\top \mathbf{X})$. The variance of a linear combination is then $\mathbf{c}^\top \Sigma \mathbf{c}$, its covariance with itself.
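These relations can be illustrated with sample quantities, for which they hold exactly. In the sketch below, the data, the coefficient vectors `c` and `d`, and the helper `sample_cov` are assumptions introduced for the example.

```python
import numpy as np

rng = np.random.default_rng(3)
# Hypothetical samples: each row is one observation of a 3-dimensional vector X.
X = rng.normal(size=(1000, 3)) @ rng.normal(size=(3, 3))
sigma = np.cov(X, rowvar=False)

c = np.array([1.0, -2.0, 0.5])
d = np.array([0.0, 1.0, 3.0])

def sample_cov(u, v):
    """Sample covariance between two 1-D arrays of paired observations."""
    return np.dot(u - u.mean(), v - v.mean()) / (len(u) - 1)

cX = X @ c   # the linear combination c^T X, one value per observation
dX = X @ d   # the linear combination d^T X

row = c @ sigma            # c^T Sigma: covariances of c^T X with each entry of X
bilinear = d @ sigma @ c   # d^T Sigma c: covariance between d^T X and c^T X
variance = c @ sigma @ c   # c^T Sigma c: variance of c^T X
```

Each of the three values matches the corresponding covariance computed directly from `cX`, `dX`, and the columns of `X`.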

Which matrices are covariance matrices?

From the identity just above (let $\mathbf{b}$ be a $p \times 1$ real-valued vector)

$$\operatorname{var}(\mathbf{b}^\top \mathbf{X}) = \mathbf{b}^\top \operatorname{var}(\mathbf{X}) \mathbf{b},$$

the fact that the variance of any real-valued random variable is nonnegative, and the symmetry of the covariance matrix's definition, it follows that only a positive semi-definite symmetric matrix can be a covariance matrix. The answer to the converse question, whether every positive semi-definite symmetric matrix is a covariance matrix, is "yes." To see this, suppose M is a p×p positive semi-definite symmetric matrix. From the finite-dimensional case of the spectral theorem, it follows that M has a nonnegative symmetric square root, which we denote $M^{1/2}$. Let $\mathbf{X}$ be any p×1 column vector-valued random variable whose covariance matrix is the p×p identity matrix. Then

$$\operatorname{var}(M^{1/2} \mathbf{X}) = M^{1/2} \operatorname{var}(\mathbf{X}) M^{1/2} = M.$$
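The construction in this argument can be carried out numerically. The sketch below builds the symmetric square root from an eigendecomposition and applies it to standard normal samples, which are one convenient choice of a vector with identity covariance. The matrix `M` and the function name `symmetric_sqrt` are assumptions for illustration.

```python
import numpy as np

def symmetric_sqrt(m):
    """Nonnegative symmetric square root of a positive semi-definite symmetric matrix."""
    eigenvalues, eigenvectors = np.linalg.eigh(m)
    # Guard against tiny negative eigenvalues caused by rounding.
    eigenvalues = np.clip(eigenvalues, 0.0, None)
    # V diag(sqrt(lambda)) V^T
    return (eigenvectors * np.sqrt(eigenvalues)) @ eigenvectors.T

# Hypothetical target matrix M (symmetric and positive definite).
M = np.array([[4.0, 2.0, 0.0],
              [2.0, 3.0, 1.0],
              [0.0, 1.0, 2.0]])
root = symmetric_sqrt(M)

rng = np.random.default_rng(4)
X = rng.normal(size=(100_000, 3))   # rows have identity covariance
Y = X @ root                        # each row is M^{1/2} x, because root is symmetric
sample_cov = np.cov(Y, rowvar=False)
```

The product `root @ root` reproduces `M` up to rounding, and `sample_cov` approaches `M` as the number of samples grows.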

How to find a valid covariance matrix?

In some applications (e.g. building data models from only partially observed data) one wants to find the “nearest” covariance matrix to a given symmetric matrix (e.g. of observed covariances). In 2002, Higham[2] formalized the notion of nearness using a weighted Frobenius norm and provided a method for computing the nearest correlation matrix, that is, the nearest covariance matrix with unit diagonal.
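A simpler, related computation is sketched below: projecting a symmetric matrix onto the set of positive semi-definite matrices by setting its negative eigenvalues to zero, which gives the nearest such matrix in the unweighted Frobenius norm. This is not Higham's 2002 algorithm, which additionally handles weights and a unit-diagonal constraint. The function name `nearest_psd` and the example matrix are assumptions for illustration.

```python
import numpy as np

def nearest_psd(symmetric):
    """Project a symmetric matrix onto the positive semi-definite cone
    (nearest in the unweighted Frobenius norm) by clipping negative eigenvalues."""
    eigenvalues, eigenvectors = np.linalg.eigh(symmetric)
    clipped = np.clip(eigenvalues, 0.0, None)
    projected = (eigenvectors * clipped) @ eigenvectors.T
    # Remove any slight asymmetry introduced by rounding.
    return (projected + projected.T) / 2

# Hypothetical "observed" matrix: symmetric, but with one negative eigenvalue,
# so it cannot be a covariance matrix.
observed = np.array([[1.0, 0.9, -0.9],
                     [0.9, 1.0, 0.9],
                     [-0.9, 0.9, 1.0]])
repaired = nearest_psd(observed)
```

Note that the diagonal of `repaired` is generally no longer equal to one, so the result is a valid covariance matrix but not a correlation matrix.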

Complex random vectors

The variance of a complex scalar-valued random variable with expected value μ is conventionally defined using complex conjugation:

$$\operatorname{var}(z) = \operatorname{E}\left[ (z - \mu)(z - \mu)^{*} \right],$$

where the complex conjugate of a complex number z is denoted $z^{*}$.

If Z is a column-vector of complex-valued random variables, then we take the conjugate transpose by both transposing and conjugating, getting a square matrix:

$$\operatorname{E}\left[ (Z - \mu)(Z - \mu)^{*} \right],$$

where $Z^{*}$ denotes the conjugate transpose, which is applicable to the scalar case since the transpose of a scalar is still a scalar.
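As a minimal sketch of the complex case, the code below estimates this matrix from samples and can be used to check that the result is Hermitian with a real diagonal. The sample data and the mixing matrix are assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(5)
n_obs = 5000
# Hypothetical complex samples: each row is one observation of a 2-dimensional vector Z.
W = rng.normal(size=(n_obs, 2)) + 1j * rng.normal(size=(n_obs, 2))
Z = W @ np.array([[1.0, 0.5j],
                  [0.0, 1.0]])

centered = Z - Z.mean(axis=0)
# Average of (Z - mu)(Z - mu)^* over the observations. Rows are observations,
# so the (i, j) entry sums centered[k, i] * conj(centered[k, j]) over k.
sigma = centered.T @ centered.conj() / (n_obs - 1)
```

Conjugating the second factor is what makes the diagonal entries real and nonnegative; without it the result would not in general be Hermitian.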

Estimation

The derivation of the maximum-likelihood estimator of the covariance matrix of a multivariate normal distribution is perhaps surprisingly subtle. See estimation of covariance matrices.

Probability density function

The probability density function of a set of n correlated random variables whose joint distribution is an n-dimensional Gaussian is given on the Maximum likelihood page.

See also

- Estimation of covariance matrices

- Multivariate statistics

- Sample covariance matrix

- Gramian matrix

- Eigenvalue decomposition

Notes

- [1] Wasserman, Larry (2004). All of Statistics: A Concise Course in Statistical Inference.

- [2] Higham, Nicholas J. (2002). "Computing the nearest correlation matrix—a problem from finance". IMA Journal of Numerical Analysis 22 (3): 329-343. doi:10.1093/imanum/22.3.329.

References

- Eric W. Weisstein, Covariance Matrix at MathWorld.

- N.G. van Kampen, Stochastic processes in physics and chemistry. New York: North-Holland, 1981.
