From Wikipedia, the free encyclopedia

CUDA (Compute Unified Device Architecture) is a parallel computing architecture developed by NVIDIA. Simply put, CUDA is the computing engine in NVIDIA graphics processing units (GPUs) that is accessible to software developers through industry-standard programming languages. Programmers use 'C for CUDA', compiled through a PathScale Open64 C compiler,[1] to code algorithms for execution on the GPU. The CUDA architecture supports a range of computational interfaces, including C. Third-party wrappers are also available for Python, Fortran and Java.

The latest drivers all contain the necessary CUDA components. CUDA works with all NVIDIA GPUs from the G8X series onwards, including the GeForce, Quadro and Tesla lines. NVIDIA states that programs developed for the GeForce 8 series will also work without modification on all future NVIDIA video cards, due to binary compatibility. CUDA gives developers access to the native instruction set and memory of the parallel computational elements in CUDA GPUs. Using CUDA, the latest NVIDIA GPUs effectively become open architectures like CPUs. Unlike CPUs, however, GPUs have a parallel "many-core" architecture capable of running thousands of threads simultaneously. If an application is suited to this kind of architecture, the GPU can offer large performance benefits.

In the computer gaming industry, in addition to graphics rendering, graphics cards are used for game physics calculations (physical effects such as debris, smoke, fire and fluids); examples include PhysX and Bullet. CUDA has also been used to accelerate non-graphical applications in computational biology, cryptography and other fields by an order of magnitude or more.[2][3][4][5] An example of this is the BOINC distributed computing client.[6]

CUDA provides both a low-level API and a higher-level API. The initial CUDA SDK was made public on 15 February 2007. NVIDIA has released versions of the CUDA API for Microsoft Windows and Linux. Mac OS X was added as a fully supported platform in version 2.0,[7] which supersedes the beta released on 14 February 2008.[8]

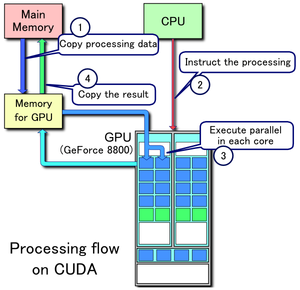

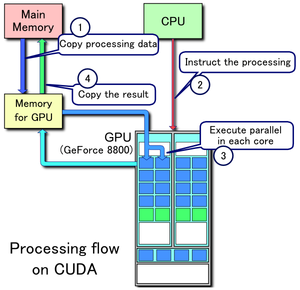

Example of CUDA processing flow

1. Copy data from main memory to GPU memory.
2. The CPU instructs the GPU to start processing.
3. The GPU executes the work in parallel on each core.
4. Copy the result from GPU memory back to main memory.
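The four steps above can be mimicked on the CPU to make the data movement explicit. The following is only a sketch: no GPU or CUDA library is involved, and every name in it (`FakeDevice`, `square_kernel`, `run_flow`) is hypothetical and chosen for illustration.

```python
# CPU-only illustration of the four-step flow; nothing here touches a GPU.
# All names (FakeDevice, square_kernel, run_flow) are hypothetical.

class FakeDevice:
    """Stands in for GPU memory: a buffer store separate from host data."""

    def __init__(self):
        self.memory = {}

    def copy_to_device(self, name, host_data):
        # Step 1: copy data from main memory to (simulated) GPU memory.
        self.memory[name] = list(host_data)

    def launch(self, kernel, n_threads, *buffer_names):
        # Steps 2 and 3: the host requests the launch, then every thread
        # index runs the same kernel (sequentially here, in parallel on a GPU).
        buffers = [self.memory[name] for name in buffer_names]
        for thread_id in range(n_threads):
            kernel(thread_id, *buffers)

    def copy_to_host(self, name):
        # Step 4: copy the result from GPU memory back to main memory.
        return list(self.memory[name])


def square_kernel(i, src, dst):
    # Each simulated thread handles exactly one element.
    dst[i] = src[i] * src[i]


def run_flow(host_input):
    dev = FakeDevice()
    dev.copy_to_device("src", host_input)
    dev.copy_to_device("dst", [0] * len(host_input))
    dev.launch(square_kernel, len(host_input), "src", "dst")
    return dev.copy_to_host("dst")
```

Calling `run_flow([1, 2, 3, 4])` returns `[1, 4, 9, 16]`. The host list is never modified by the kernel; only the copies held by the simulated device are.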

Advantages

CUDA has several advantages over traditional general purpose computation on GPUs (GPGPU) using graphics APIs.

- Scattered reads – code can read from arbitrary addresses in memory.

- Shared memory – CUDA exposes a fast shared memory region (16 KB in size) that can be shared amongst threads. This can be used as a user-managed cache, enabling higher bandwidth than is possible using texture lookups.[9]

- Faster downloads and readbacks to and from the GPU.

- Full support for integer and bitwise operations, including integer texture lookups.
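To illustrate what "user-managed cache" means for the shared memory region, the sketch below counts reads from slow memory for a 3-point neighbour sum, first with every thread reading its neighbours directly and then with each block staging its slice in a small tile first. It is a CPU-only model under simplifying assumptions (one read counted per element access, no hardware caching), and the function names are hypothetical.

```python
def stencil_global(data):
    """3-point sum where every simulated thread reads from global memory."""
    n = len(data)
    reads = 0
    out = [0] * n
    for i in range(n):                      # one iteration per thread
        for j in (i - 1, i, i + 1):
            if 0 <= j < n:
                out[i] += data[j]
                reads += 1                  # one global-memory read
    return out, reads


def stencil_tiled(data, block_size):
    """Same result, but each block first copies its slice into a tile."""
    n = len(data)
    reads = 0
    out = [0] * n
    for start in range(0, n, block_size):   # one iteration per block
        end = min(start + block_size, n)
        lo, hi = max(start - 1, 0), min(end + 1, n)
        tile = data[lo:hi]                  # staged copy, standing in for shared memory
        reads += hi - lo                    # each element fetched once per block
        for i in range(start, end):         # threads now read only the tile
            for j in (i - 1, i, i + 1):
                if 0 <= j < n:
                    out[i] += tile[j - lo]
    return out, reads
```

With 64 elements and blocks of 16, the first version performs 190 reads from global memory and the tiled version 70, while both produce the same output.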

Limitations

- It uses a recursion-free, function-pointer-free subset of the C language, plus some simple extensions. However, a single process must run spread across multiple disjoint memory spaces, unlike other C language runtime environments.

- Texture rendering is not supported.

- Recursive functions are not supported and must be converted to loops.

- Double precision has no deviations from the IEEE 754 standard. In single precision, denormals and signalling NaNs are not supported; only two IEEE rounding modes are supported (chop and round-to-nearest-even), and these are specified per instruction rather than in a control word (whether this is a limitation is arguable); and the precision of division and square root is slightly lower than full single precision.

- The bus bandwidth and latency between the CPU and the GPU may be a bottleneck.

- Threads should be run in groups of at least 32 for best performance, with the total number of threads in the thousands. Branches in the program code do not impact performance significantly, provided that all 32 threads in a group take the same execution path; the SIMD execution model becomes a significant limitation for any inherently divergent task (e.g., traversing a ray tracing acceleration data structure).

- CUDA-enabled GPUs are only available from NVIDIA (GeForce 8 series and above, Quadro and Tesla).[10]
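The cost of divergent branches within a group of 32 threads can be illustrated with a simple counting model. The sketch below assumes, as a simplification, that a group needs one serialized pass per distinct execution path taken by its threads; real hardware behaviour is more nuanced. The name `warp_passes` is hypothetical ("warp" is NVIDIA's term for such a group).

```python
WARP_SIZE = 32  # threads that execute in lockstep, per the list above


def warp_passes(branch_of_thread):
    """Return, per group of 32 threads, the number of serialized passes.

    branch_of_thread[i] is a label for the execution path thread i takes.
    Threads in one group that take different paths cannot run together in
    this model, so the group needs one pass per distinct path.
    """
    passes = []
    for start in range(0, len(branch_of_thread), WARP_SIZE):
        warp = branch_of_thread[start:start + WARP_SIZE]
        passes.append(len(set(warp)))
    return passes
```

For 64 threads, `warp_passes(["a"] * 32 + ["b"] * 32)` gives `[1, 1]` because each group is uniform, whereas `warp_passes(["a", "b"] * 32)` gives `[2, 2]`: the same amount of work, but every group now diverges.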

Supported GPUs

The following devices officially support CUDA. Note that many applications require at least 256 MB of dedicated VRAM.[11]

| Nvidia GeForce | Nvidia GeForce Mobile | Nvidia Quadro | Nvidia Tesla |
| --- | --- | --- | --- |
| GeForce GTX 295 | GeForce 9800M GTX | Quadro FX 5800 | Tesla S1070 |
| GeForce GTX 285 | GeForce 9800M GTS | Quadro FX 5600 | Tesla C1060 |
| GeForce GTX 280 | GeForce 9800M GT | Quadro FX 4800 | Tesla C870 |
| GeForce GTX 275 | GeForce 9700M GTS | Quadro FX 4700 X2 | Tesla D870 |
| GeForce GTX 260 | GeForce 9700M GT | Quadro FX 4600 | Tesla S870 |
| GeForce GTS 250 | GeForce 9650M GS | Quadro FX 3700 | |
| GeForce 9800 GX2 | GeForce 9600M GT | Quadro FX 1700 | |
| GeForce 9800 GTX+ | GeForce 9600M GS | Quadro FX 570 | |
| GeForce 9800 GTX | GeForce 9500M GS | Quadro FX 370 | |
| GeForce 9800 GT | GeForce 9500M G | Quadro NVS 290 | |
| GeForce 9600 GSO | GeForce 9400M G | Quadro FX 3600M | |
| GeForce 9600 GT | GeForce 9300M GS | Quadro FX 1600M | |
| GeForce 9500 GT | GeForce 9300M G | Quadro FX 770M | |
| GeForce 9400 GT | GeForce 9200M GS | Quadro FX 570M | |
| GeForce 9400 mGPU | GeForce 9100M G | Quadro FX 370M | |
| GeForce 9300 mGPU | GeForce 8800M GTS | Quadro Plex 1000 Model IV | |
| GeForce 8800 Ultra | GeForce 8700M GT | Quadro Plex 1000 Model S4 | |
| GeForce 8800 GTX | GeForce 8600M GT | | |
| GeForce 8800 GTS | GeForce 8600M GS | | |
| GeForce 8800 GT | GeForce 8400M GT | | |
| GeForce 8800 GS | GeForce 8400M GS | | |
| GeForce 8600 GTS | GeForce 8400M G | | |
| GeForce 8600 GT | GeForce 8200M G | | |
| GeForce 8500 GT | | | |
| GeForce 8400 GS | | | |
| GeForce 8300 mGPU | | | |
| GeForce 8200 mGPU | | | |
| GeForce 8100 mGPU | | | |

See the Comparison of Nvidia graphics processing units for more information.

Example

This example code in C++ loads a texture from an image into an array on the GPU:

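    // Texture reference; must be declared at file scope.
    texture<float, 2, cudaReadModeElementType> tex;

    // Kernel: each thread copies one texel into the output buffer.
    __global__ void kernel(float* odata, int width, int height)
    {
        unsigned int x = blockIdx.x*blockDim.x + threadIdx.x;
        unsigned int y = blockIdx.y*blockDim.y + threadIdx.y;
        if (x < width && y < height) {  // skip threads past the image edge
            float c = tex2D(tex, x, y);
            odata[y*width+x] = c;
        }
    }

    // Host code. image is host data, d_odata is a device output buffer
    // allocated by the caller; the function name is illustrative only.
    void copy_via_texture(const float* image, float* d_odata, int width, int height)
    {
        cudaArray* cu_array;

        // Allocate array
        cudaChannelFormatDesc desc = cudaCreateChannelDesc<float>();
        cudaMallocArray(&cu_array, &desc, width, height);

        // Copy image data to array (size is given in bytes)
        cudaMemcpyToArray(cu_array, 0, 0, image, width*height*sizeof(float), cudaMemcpyHostToDevice);

        // Bind the array to the texture
        cudaBindTextureToArray(tex, cu_array);

        // Run kernel; round the grid up so partial blocks are covered
        dim3 blockDim(16, 16, 1);
        dim3 gridDim((width + blockDim.x - 1) / blockDim.x, (height + blockDim.y - 1) / blockDim.y, 1);
        kernel<<< gridDim, blockDim, 0 >>>(d_odata, width, height);

        cudaUnbindTexture(tex);
    }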
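A launch of this kind splits the image into 16×16 blocks. When the image dimensions are not multiples of the block size, plain integer division leaves the last partial row and column of blocks uncovered; a common remedy is ceiling division for the grid size together with a bounds check in the kernel. The CPU-only sketch below enumerates the pixels such a guarded launch would write. The function names are hypothetical, and no CUDA call is made.

```python
def grid_size(extent, block):
    """Blocks needed to cover `extent` elements (ceiling division)."""
    return (extent + block - 1) // block


def covered_pixels(width, height, block_x=16, block_y=16):
    """Enumerate the (x, y) pairs written by a guarded 2D launch."""
    written = []
    for by in range(grid_size(height, block_y)):        # blockIdx.y
        for bx in range(grid_size(width, block_x)):     # blockIdx.x
            for ty in range(block_y):                   # threadIdx.y
                for tx in range(block_x):               # threadIdx.x
                    x = bx * block_x + tx
                    y = by * block_y + ty
                    if x < width and y < height:        # bounds guard
                        written.append((x, y))
    return written
```

For a 20×10 image, `grid_size(20, 16)` is 2 whereas `20 // 16` is 1, so truncating division would leave columns 16 to 19 unwritten. With the guard in place, every pixel is written exactly once even though the grid overshoots the image.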

Below is an example in Python that computes the element-wise product of two arrays on the GPU. The Python language bindings can be obtained from PyCUDA.

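    import pycuda.driver as drv
    from pycuda.compiler import SourceModule
    import numpy

    # Initialise the driver API and create a context on the first GPU
    drv.init()
    dev = drv.Device(0)
    ctx = dev.make_context()

    # Compile the kernel; each thread multiplies one pair of elements
    mod = SourceModule("""
    __global__ void multiply_them(float *dest, float *a, float *b)
    {
      const int i = threadIdx.x;
      dest[i] = a[i] * b[i];
    }
    """)

    multiply_them = mod.get_function("multiply_them")

    a = numpy.random.randn(400).astype(numpy.float32)
    b = numpy.random.randn(400).astype(numpy.float32)

    dest = numpy.zeros_like(a)
    multiply_them(
            drv.Out(dest), drv.In(a), drv.In(b),
            block=(400,1,1))

    # Difference from the CPU result; should be all zeros
    print(dest - a*b)

    ctx.pop()  # release the context created above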


References

1. NVIDIA on DailyTech.
2. Giorgos Vasiliadis, Spiros Antonatos, Michalis Polychronakis, Evangelos P. Markatos and Sotiris Ioannidis (September 2008, Boston, MA, USA). "Gnort: High Performance Network Intrusion Detection Using Graphics Processors" (PDF). Proceedings of the 11th International Symposium On Recent Advances In Intrusion Detection (RAID). http://www.ics.forth.gr/dcs/Activities/papers/gnort.raid08.pdf.
3. Schatz, M.C., Trapnell, C., Delcher, A.L., Varshney, A. (2007). "High-throughput sequence alignment using Graphics Processing Units". BMC Bioinformatics 8: 474. doi:10.1186/1471-2105-8-474. http://www.biomedcentral.com/1471-2105/8/474.
4. Manavski, Svetlin A.; Giorgio Valle (2008). "CUDA compatible GPU cards as efficient hardware accelerators for Smith-Waterman sequence alignment". BMC Bioinformatics 9 (Suppl 2): S10. doi:10.1186/1471-2105-9-S2-S10. http://www.biomedcentral.com/1471-2105/9/S2/S10.
5. Pyrit - Google Code. http://code.google.com/p/pyrit/.
6. Use your NVIDIA GPU for scientific computing, BOINC official site (December 18, 2008).
7. NVIDIA CUDA Software Development Kit (CUDA SDK) - Release Notes Version 2.0 for Mac OS X.
8. CUDA 1.1 - Now on Mac OS X (posted on February 14, 2008).
9. Silberstein, Mark (2007). "Efficient computation of Sum-products on GPUs" (PDF). http://www.technion.ac.il/~marks/docs/SumProductPaper.pdf.
10. "CUDA-Enabled Products". CUDA Zone. NVIDIA Corporation. http://www.nvidia.com/object/cuda_learn_products.html. Retrieved on 2008-11-03.
11. CUDA-Enabled GPU Products.
