Quantum decoherence

From Wikipedia, the free encyclopedia


In quantum mechanics, quantum decoherence is the mechanism by which quantum systems interact with their environments to exhibit probabilistically additive behavior. Quantum decoherence gives the appearance of wave function collapse and justifies the framework and intuition of classical physics as an acceptable approximation: decoherence is the mechanism by which the classical limit emerges out of a quantum starting point and it determines the location of the quantum-classical boundary. Decoherence occurs when a system interacts with its environment in a thermodynamically irreversible way. This prevents different elements in the quantum superposition of the system+environment's wavefunction from interfering with each other. Decoherence has been a subject of active research for the last two decades.[1]

Decoherence can be viewed as the loss of information from a system into the environment (often modeled as a heat bath).[2] Viewed in isolation, the system's dynamics are non-unitary (although the combined system plus environment evolves in a unitary fashion).[3] Thus the dynamics of the system alone, treated in isolation from the environment, are irreversible. As with any coupling, entanglements are generated between the system and environment.

A quantum state is a superposition of other quantum states, for instance, the spin states of an electron. In the Copenhagen interpretation, the superposition of states was described by a wave function, and the wave function collapse was given the name decoherence. Today, the decoherence program studies quantum correlations between the states of a quantum system and its environment. But the original sense remains: decoherence refers to the untangling of quantum states to produce a single macroscopic reality.[1]

Decoherence does not generate actual wave function collapse. It only provides an explanation for the appearance of wavefunction collapse. The quantum nature of the system is simply "leaked" into the environment. A total superposition of the universal wavefunction still occurs, but its ultimate fate remains an interpretational issue.

Decoherence represents a major problem for the practical realization of quantum computers, since these rely heavily on the undisturbed evolution of quantum coherences.


Mechanisms

Decoherence is not a new theoretical concept, but instead a set of new perspectives in which the environment is no longer ignored in modeling systems. To examine how decoherence operates, an "intuitive" model is presented. The model requires some familiarity with quantum theory basics. Analogies are made between visualisable classical phase spaces and Hilbert spaces. A more rigorous derivation in Dirac notation shows how decoherence destroys interference effects and the "quantum nature" of systems. Next, the density matrix approach is presented for perspective.

Phase space picture

An N-particle system can be represented in non-relativistic quantum mechanics by a wavefunction, ψ(x1, x2, ..., xN). This has analogies with the classical phase space. A classical phase space contains a real-valued function in 6N dimensions (each particle contributes 3 spatial coordinates and 3 momenta). Our "quantum" phase space, by contrast, contains a complex-valued function in a 3N-dimensional space. Position and momentum do not commute in the quantum case, but the space of wavefunctions still has the mathematical structure of a Hilbert space. Aside from these differences, the analogy holds.

Different previously isolated, non-interacting systems occupy different phase spaces. Alternatively, we can say they occupy different, lower-dimensional subspaces in the phase space of the joint system. The effective dimensionality of a system's phase space is the number of degrees of freedom present, which in non-relativistic models is 6 times the number of a system's free particles. For a macroscopic system this will be a very large dimensionality. When two systems (and the environment would be a system) start to interact, though, their associated state vectors are no longer constrained to the subspaces. Instead the combined state vector time-evolves along a path through the "larger volume", whose dimensionality is the sum of the dimensions of the two subspaces. As an analogy, a square (a 2-d surface) extended by just one dimension (a line) forms a cube, and the cube has a greater volume, in some sense, than its component square and line axes. The extent to which two vectors interfere with each other is a measure of how "close" they are to each other (formally, their overlap or Hilbert space scalar product) in the phase space. When a system couples to an external environment, the dimensionality of, and hence the "volume" available to, the joint state vector increases enormously. Each environmental degree of freedom contributes an extra dimension.

The original system's wavefunction can be expanded arbitrarily as a sum of elements in a quantum superposition. Each expansion corresponds to a projection of the wave vector onto a basis. The bases can be chosen at will. Let us choose any expansion where the resulting elements interact with the environment in an element-specific way. Such elements will—with overwhelming probability—be rapidly separated from each other by their natural unitary time evolution along their own independent paths. After a very short interaction, there is almost no chance of any further interference. The process is effectively irreversible. The different elements effectively become "lost" from each other in the expanded phase space created by coupling with the environment. The original elements are said to have decohered. The environment has effectively selected out those expansions or decompositions of the original state vector that decohere (or lose phase coherence) with each other. This is called "environmentally-induced-superselection", or einselection.[4] The decohered elements of the system no longer exhibit quantum interference between each other, as in a double-slit experiment. Any elements that decohere from each other via environmental interactions are said to be quantum entangled with the environment. The converse is not true: not all entangled states are decohered from each other.

Any measuring device or apparatus acts as an environment since, at some stage along the measuring chain, it has to be large enough to be read by humans. It must possess a very large number of hidden degrees of freedom. In effect, the interactions may be considered to be quantum measurements. As a result of an interaction, the wave functions of the system and the measuring device become entangled with each other. Decoherence happens when different portions of the system's wavefunction become entangled in different ways with the measuring device. For two einselected elements of the entangled system's state to interfere, both the original system and the measuring device in both elements must significantly overlap, in the scalar product sense. If the measuring device has many degrees of freedom, it is very unlikely for this to happen.
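As a rough numerical illustration of why many degrees of freedom suppress this overlap, the sketch below assumes a toy measuring device made of N independent two-level particles. This toy model, the function names, and the angle 0.3 are assumptions made here for illustration, not something taken from the cited sources. Each particle ends up in one of two states whose scalar product is cos θ, depending on which element of the system's superposition it interacted with, so the scalar product of the two full device states is the product of the single-particle values.

```python
import math


def single_overlap(theta: float) -> float:
    """Scalar product <e_0|e_1> of two single-particle states separated by angle theta."""
    # |e_0> = (1, 0) and |e_1> = (cos(theta), sin(theta)), so <e_0|e_1> = cos(theta).
    return math.cos(theta)


def environment_overlap(theta: float, n_particles: int) -> float:
    """Scalar product of two product states built from n_particles independent particles."""
    # The inner product of product states is the product of the factors' inner products.
    return single_overlap(theta) ** n_particles


if __name__ == "__main__":
    theta = 0.3  # hypothetical per-particle disturbance
    for n in (1, 10, 100, 1000):
        print(n, environment_overlap(theta, n))
```

Even though each particle is only slightly disturbed, the overlap shrinks geometrically with the number of particles, which is the sense in which significant overlap becomes "very unlikely" for a large device.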

As a consequence, the system behaves as a classical statistical ensemble of the different elements rather than as a single coherent quantum superposition of them. From the perspective of each ensemble member's measuring device, the system appears to have irreversibly collapsed onto a state with a precise value for the measured attributes, relative to that element.

Dirac notation

Using the Dirac notation, let the system initially be in the state $|\psi\rangle$, where

$$|\psi\rangle = \sum_i |i\rangle \langle i|\psi\rangle$$

where the $|i\rangle$s form an einselected basis (environmentally induced selected eigen basis[4]); and let the environment initially be in the state $|\epsilon\rangle$. The vector basis of the total combined system and environment can be formed by tensor multiplying the basis vectors of the subsystems together. Thus, before any interaction between the two subsystems, the joint state can be written as:

$$|\text{before}\rangle = \sum_i |i\rangle |\epsilon\rangle \langle i|\psi\rangle$$

There are two extremes in the way the system can interact with its environment: either (1) the system loses its distinct identity and merges with the environment (e.g. photons in a cold, dark cavity get converted into molecular excitations within the cavity walls), or (2) the system is not disturbed at all, even though the environment is disturbed (e.g. the idealized non-disturbing measurement). In general an interaction is a mixture of these two extremes, which we shall examine:

System absorbed by environment

If the environment absorbs the system, each element of the total system's basis interacts with the environment such that:

$|i\rangle |\epsilon\rangle$ evolves into $|\epsilon_i\rangle$

and so

$|\text{before}\rangle$ evolves into $|\text{after}\rangle = \sum_i |\epsilon_i\rangle \langle i|\psi\rangle$

where the unitarity of time-evolution demands that the total state basis remains orthonormal and in particular their scalar or inner products with each other vanish, since $\langle i|j\rangle = \delta_{ij}$:

$$\langle \epsilon_i|\epsilon_j\rangle = \delta_{ij}$$

This orthonormality of the environment states is the defining characteristic required for einselection.[4]

System not disturbed by environment

This is the idealised measurement/undisturbed system case in which each element of the basis interacts with the environment such that:

$|i\rangle |\epsilon\rangle$ evolves into the product $|i, \epsilon_i\rangle = |i\rangle |\epsilon_i\rangle$

i.e. the system disturbs the environment, but is itself undisturbed by the environment, and so:

$|\text{before}\rangle$ evolves into $|\text{after}\rangle = \sum_i |i, \epsilon_i\rangle \langle i|\psi\rangle$

where, again, unitarity demands that:

$$\langle i, \epsilon_i|j, \epsilon_j\rangle = \langle i|j\rangle \langle \epsilon_i|\epsilon_j\rangle = \delta_{ij} \langle \epsilon_i|\epsilon_j\rangle = \delta_{ij}$$

and additionally decoherence requires, by virtue of the large number of hidden degrees of freedom in the environment, that

$$\langle \epsilon_i|\epsilon_j\rangle \approx \delta_{ij}$$

As before, this is the defining characteristic for decoherence to become einselection.[4] The approximation becomes more exact as the number of environmental degrees of freedom affected increases.

Note that if the system basis $|i\rangle$ were not an einselected basis then the last condition is trivial since the disturbed environment is not a function of $i$ and we have the trivial disturbed environment basis $|\epsilon_j\rangle = |\epsilon'\rangle$. This would correspond to the system basis being degenerate with respect to the environmentally-defined-measurement-observable. For a complex environmental interaction (which would be expected for a typical macroscale interaction) a non-einselected basis would be hard to define.

Loss of interference and the transition from quantum to classical

The utility of decoherence lies in its application to the analysis of probabilities, before and after environmental interaction, and in particular to the vanishing of quantum interference terms after decoherence has occurred. If we ask what is the probability of observing the system making a transition or quantum leap from ψ to φ before ψ has interacted with its environment, then application of the Born probability rule states that the transition probability is the modulus squared of the scalar product of the two states:

$$\text{prob}_\text{before}(\psi \to \phi) = |\langle\psi|\phi\rangle|^2 = \Big|\sum_i \psi_i^* \phi_i\Big|^2 = \sum_i |\psi_i^* \phi_i|^2 + \sum_{ij;\, i \neq j} \psi_i^* \psi_j \phi_j^* \phi_i$$

where $\psi_i = \langle i|\psi\rangle$, $\psi_i^* = \langle\psi|i\rangle$ and $\phi_i = \langle i|\phi\rangle$ etc.

Terms appear in the expansion of the transition probability above which involve $i \neq j$; these can be thought of as representing interference between the different basis elements or quantum alternatives. This is a purely quantum effect and represents the non-additivity of the probabilities of quantum alternatives.

To calculate the probability of observing the system making a quantum leap from ψ to φ after ψ has interacted with its environment, application of the Born probability rule states that we must sum over all the relevant possible states of the environment, $|\epsilon_j\rangle$, before squaring the modulus:

$$\text{prob}_\text{after}(\psi \to \phi) = \sum_j |\langle\text{after}|\phi, \epsilon_j\rangle|^2 = \sum_j \Big|\sum_i \psi_i^* \langle i, \epsilon_i|\phi, \epsilon_j\rangle\Big|^2 = \sum_j \Big|\sum_i \psi_i^* \phi_i \langle\epsilon_i|\epsilon_j\rangle\Big|^2$$

The internal summation vanishes when we apply the decoherence / einselection condition $\langle\epsilon_i|\epsilon_j\rangle \approx \delta_{ij}$ and the formula simplifies to:

$$\text{prob}_\text{after}(\psi \to \phi) \approx \sum_j |\psi_j^* \phi_j|^2 = \sum_i |\psi_i^* \phi_i|^2$$

If we compare this with the formula we derived before the environment introduced decoherence we can see that the effect of decoherence has been to move the summation sign $\sum_i$ from inside of the modulus sign to outside. As a result all the cross- or quantum interference-terms:

$$\sum_{ij;\, i \neq j} \psi_i^* \psi_j \phi_j^* \phi_i$$

have vanished from the transition probability calculation. The decoherence has irreversibly converted quantum behaviour (additive probability amplitudes) to classical behaviour (additive probabilities).[5][4][6]

In terms of density matrices, the loss of interference effects corresponds to the diagonalization of the "environmentally traced over" density matrix.[4]
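The bookkeeping in this section can be checked numerically. The following is a minimal sketch, assuming the amplitudes are supplied as lists `psi[i]` = ⟨i|ψ⟩ and `phi[i]` = ⟨i|φ⟩ in the einselected basis, and assuming the environment states are exactly orthogonal after the interaction. The function names and the two-state example are illustrative choices made here.

```python
import math


def prob_before(psi, phi):
    """|sum_i conj(psi_i) * phi_i|^2: amplitudes are added first, then squared."""
    amplitude = sum(a.conjugate() * b for a, b in zip(psi, phi))
    return abs(amplitude) ** 2


def prob_after(psi, phi):
    """sum_i |conj(psi_i) * phi_i|^2: probabilities of the alternatives are added."""
    return sum(abs(a.conjugate() * b) ** 2 for a, b in zip(psi, phi))


def interference(psi, phi):
    """Cross terms: sum over i != j of conj(psi_i) * psi_j * conj(phi_j) * phi_i."""
    total = 0j
    for i in range(len(psi)):
        for j in range(len(psi)):
            if i != j:
                total += psi[i].conjugate() * psi[j] * phi[j].conjugate() * phi[i]
    # The (i, j) and (j, i) terms are complex conjugates, so the sum is real.
    return total.real


if __name__ == "__main__":
    s = 1 / math.sqrt(2)
    psi = [s, s]   # equal superposition of the two basis elements
    phi = [s, -s]  # orthogonal superposition
    print(prob_before(psi, phi), prob_after(psi, phi), interference(psi, phi))
```

For this choice the interference term is negative and cancels the additive part exactly before decoherence, while after decoherence only the additive part survives.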

Density matrix approach

The effect of decoherence on density matrices is essentially the decay or rapid vanishing of the off-diagonal elements of the partial trace of the joint system's density matrix, i.e. the trace, with respect to any environmental basis, of the density matrix of the combined system and its environment. The decoherence irreversibly converts the "averaged" or "environmentally traced over"[4] density matrix from a pure state to a reduced mixture; it is this that gives the appearance of wavefunction collapse. Again this is called "environmentally-induced-superselection", or einselection.[4] The advantage of taking the partial trace is that this procedure is indifferent to the environmental basis chosen.
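A small worked example may make the partial trace concrete. The sketch below assumes a single system qubit in an equal superposition, entangled with a two-dimensional toy environment whose two "record" states have scalar product cos θ. The toy model and all names are assumptions made here for illustration. Tracing over the environment leaves the diagonal elements untouched and multiplies the off-diagonal elements by that scalar product.

```python
import math


def joint_state(c, env_states):
    """Amplitudes Psi[i][k] of sum_i c_i |i>|e_i> (system index i, environment index k)."""
    return [[c_i * e_k for e_k in e_i] for c_i, e_i in zip(c, env_states)]


def reduced_density_matrix(psi):
    """Partial trace over the environment: rho[i][j] = sum_k Psi[i][k] * conj(Psi[j][k])."""
    n = len(psi)
    return [[sum(a * b.conjugate() for a, b in zip(psi[i], psi[j]))
             for j in range(n)] for i in range(n)]


def dephased_qubit(theta):
    """Reduced state of the qubit when the two environment records differ by angle theta."""
    c = [1 / math.sqrt(2), 1 / math.sqrt(2)]
    env = [[1.0, 0.0], [math.cos(theta), math.sin(theta)]]
    return reduced_density_matrix(joint_state(c, env))


if __name__ == "__main__":
    for theta in (0.0, math.pi / 4, math.pi / 2):
        print(theta, dephased_qubit(theta))
```

With θ = 0 the environment carries no record and the qubit stays in a pure superposition; with θ = π/2 the records are orthogonal and the reduced matrix is diagonal.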

Operator-sum representation

Consider a system S and environment (bath) B, which are closed and can be treated quantum mechanically. Let  and

and  be the system's and bath's Hilbert spaces, respectively. Then the Hamiltonian for the combined system is

be the system's and bath's Hilbert spaces, respectively. Then the Hamiltonian for the combined system is

where  are the system and bath Hamiltonians, respecitvely, and

are the system and bath Hamiltonians, respecitvely, and  is the interaction Hamiltonian between the system and bath, and

is the interaction Hamiltonian between the system and bath, and  are the identity operators on the system and bath Hilbert spaces, respectively. The time-evolution of the density operator of this closed system is unitary and, as such, is given by

are the identity operators on the system and bath Hilbert spaces, respectively. The time-evolution of the density operator of this closed system is unitary and, as such, is given by

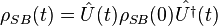

where the unitary operator is  . If the system and bath are not entangled initially, then we can write

. If the system and bath are not entangled initially, then we can write  . Therefore, the evolution of the system becomes

. Therefore, the evolution of the system becomes

The system-bath interaction Hamiltonian can be written in a general form as

where  is the operator acting on the combined system-bath Hilbert space, and

is the operator acting on the combined system-bath Hilbert space, and  are the operators that act on the system and bath, respectively. This coupling of the system and bath is the cause of decoherence in the system alone. To see this, a partial trace is performed over the bath to give a description of the system alone:

are the operators that act on the system and bath, respectively. This coupling of the system and bath is the cause of decoherence in the system alone. To see this, a partial trace is performed over the bath to give a description of the system alone:

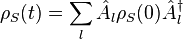

is called the reduced density matrix and gives information about the system only. If the bath is written in terms of its set of orthogonal basis kets, that is, if it has been initially diagonalized then

is called the reduced density matrix and gives information about the system only. If the bath is written in terms of its set of orthogonal basis kets, that is, if it has been initially diagonalized then  Computing the partial trace with respect to this (computational)basis gives:

Computing the partial trace with respect to this (computational)basis gives:

where $\hat{A}_l, \hat{A}_l^\dagger$ are defined as the Kraus operators and are represented as

$$\hat{A}_l = \sqrt{a_j} \, \langle k|\hat{U}|j\rangle$$

with the index $l$ combining the bath indices $j$ and $k$. This is known as the operator-sum representation (OSR). A condition on the Kraus operators can be obtained by using the fact that $\mathrm{Tr}[\rho_S(t)] = 1$; this then gives

$$\sum_l \hat{A}_l^\dagger \hat{A}_l = \hat{I}_S$$

This restriction determines if decoherence will occur or not in the OSR. In particular, when there is more than one term present in the sum for $\rho_S(t)$ then the dynamics of the system will be non-unitary and hence decoherence will take place.
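To illustrate the operator-sum form, the sketch below uses the standard single-qubit phase-flip channel, whose two Kraus operators are √(1−p)·I and √p·σz. This channel is chosen here purely as an example and is not derived from any particular bath Hamiltonian; the helper names and the value p = 0.25 are likewise assumptions. The code checks that the Kraus operators sum to the identity in the sense Σ A†A = I and applies them to a density matrix.

```python
import math


def matmul(a, b):
    """Product of two small matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def dagger(a):
    """Conjugate transpose."""
    return [[a[j][i].conjugate() for j in range(len(a))] for i in range(len(a[0]))]


def add(a, b):
    """Element-wise sum."""
    return [[x + y for x, y in zip(row_a, row_b)] for row_a, row_b in zip(a, b)]


def phase_flip_kraus(p):
    """Kraus operators sqrt(1-p)*I and sqrt(p)*sigma_z (illustrative channel)."""
    s0, s1 = math.sqrt(1 - p), math.sqrt(p)
    return [[[s0, 0.0], [0.0, s0]], [[s1, 0.0], [0.0, -s1]]]


def apply_channel(kraus, rho):
    """rho -> sum_l A_l rho A_l^dagger."""
    out = [[0j, 0j], [0j, 0j]]
    for a in kraus:
        out = add(out, matmul(matmul(a, rho), dagger(a)))
    return out


def completeness(kraus):
    """sum_l A_l^dagger A_l, which should equal the identity."""
    total = [[0j, 0j], [0j, 0j]]
    for a in kraus:
        total = add(total, matmul(dagger(a), a))
    return total


if __name__ == "__main__":
    kraus = phase_flip_kraus(0.25)
    rho = [[0.5, 0.5], [0.5, 0.5]]  # pure superposition state
    print(completeness(kraus))
    print(apply_channel(kraus, rho))
```

Because two Kraus operators are present, the map is not a single unitary: the populations on the diagonal are unchanged while the off-diagonal coherences are scaled by (1 − 2p).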

Semigroup approach

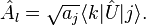

A more general consideration for the existence of decoherence in a quantum system is given by the master equation, which determines how the density matrix of the system alone evolves in time. This uses the Schroedinger picture, where evolution of the state (represented by it's density matrix) is considered. The master equation is:

where  is the system Hamiltonian,

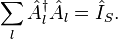

is the system Hamiltonian,  , along with a (possible) unitary contribution from the bath, Δ and LD is the Linblad decohering term.[3] The Linblad decohering term is represented as

, along with a (possible) unitary contribution from the bath, Δ and LD is the Linblad decohering term.[3] The Linblad decohering term is represented as

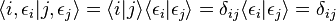

The  are basis operators for the M-dimensional space of bounded operators that act on the system Hilbert space

are basis operators for the M-dimensional space of bounded operators that act on the system Hilbert space  -these are the error generators[7]-and

-these are the error generators[7]-and  represent the elements of a positive semi-definite Hermitian matrix-these matrix elements characterize the decohering processes and, as such, are called the noise parameters[7]. The semigroup approach is particularly nice, because it distinguishes between the unitary and decohering(non-unitary) processes, which is not the case with the OSR. In particular, the non-unitary dynamics are represented by LD, whereas the unitary dynamics of the state are represented by the usual Heisenberg commutator. Note that when

represent the elements of a positive semi-definite Hermitian matrix-these matrix elements characterize the decohering processes and, as such, are called the noise parameters[7]. The semigroup approach is particularly nice, because it distinguishes between the unitary and decohering(non-unitary) processes, which is not the case with the OSR. In particular, the non-unitary dynamics are represented by LD, whereas the unitary dynamics of the state are represented by the usual Heisenberg commutator. Note that when ![L_{D}\big[\rho_{S}(t)\big] =0](http://upload.wikimedia.org/math/d/b/f/dbf01a5a16d0d528a0003c788537a091.png) , the dynamical evolution of the system is unitary. The conditions for the evolution of the system density matrix to be described by the master equation are:

, the dynamical evolution of the system is unitary. The conditions for the evolution of the system density matrix to be described by the master equation are:

- (1) the evolution of the system density matrix is determined by a one-parameter semigroup

- (2) the evolution is "completely positive" (i.e. probabilities are preserved)

- (3) the system and bath density matrices are initially decoupled.[3]
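As a hedged illustration of how such a master equation separates unitary from decohering dynamics, the sketch below integrates a single-qubit example with a simple Euler step. The assumptions made here, none of which come from the cited sources, are: units with ħ = 1, a Hamiltonian (ω/2)σz, a single error generator σz with noise parameter γ (for which the Lindblad term reduces to γ(σz ρ σz − ρ)), and the chosen step count. The function names are hypothetical.

```python
import math

SIGMA_Z = [[1.0, 0.0], [0.0, -1.0]]


def matmul(a, b):
    """Product of two 2x2 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)] for i in range(2)]


def lindblad_rhs(rho, omega, gamma):
    """Time derivative of rho: -i[H, rho] + gamma * (sz rho sz - rho), with hbar = 1."""
    h = [[omega / 2, 0.0], [0.0, -omega / 2]]
    h_rho, rho_h = matmul(h, rho), matmul(rho, h)
    sz_rho_sz = matmul(matmul(SIGMA_Z, rho), SIGMA_Z)
    return [[-1j * (h_rho[i][j] - rho_h[i][j]) + gamma * (sz_rho_sz[i][j] - rho[i][j])
             for j in range(2)] for i in range(2)]


def evolve(rho, omega, gamma, t_final, steps):
    """Explicit Euler integration of the master equation up to t_final."""
    dt = t_final / steps
    for _ in range(steps):
        d = lindblad_rhs(rho, omega, gamma)
        rho = [[rho[i][j] + dt * d[i][j] for j in range(2)] for i in range(2)]
    return rho


if __name__ == "__main__":
    rho0 = [[0.5, 0.5], [0.5, 0.5]]
    rho_t = evolve(rho0, omega=1.0, gamma=0.5, t_final=1.0, steps=10000)
    # For this model the coherence magnitude should follow 0.5 * exp(-2 * gamma * t).
    print(abs(rho_t[0][1]), 0.5 * math.exp(-1.0))
```

In this example the commutator only rotates the phase of the off-diagonal element, while the decohering term makes its magnitude decay; setting γ = 0 recovers purely unitary evolution.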

Examples of non-unitary modelling of decoherence

Decoherence can be modelled as a non-unitary process by which a system couples with its environment (although the combined system plus environment evolves in a unitary fashion).[3] Thus the dynamics of the system alone, treated in isolation, are non-unitary and, as such, are represented by irreversible transformations acting on the system's Hilbert space, $\mathcal{H}_S$. Since the system's dynamics are represented by irreversible transformations, any information present in the quantum system can be lost to the environment (or heat bath). Alternatively, the decay of quantum information caused by the coupling of the system to the environment is referred to as decoherence.[2] Thus decoherence is the process by which information of a quantum system is altered by the system's interaction with its environment (which together form a closed system), hence creating an entanglement between the system and heat bath (environment). As such, since the system is entangled with its environment in some unknown way, a description of the system by itself cannot be given without also referring to the environment (i.e. without also describing the state of the environment).

Collective dephasing

Consider a system of N qubits that is coupled to a bath symmetrically. Suppose this system of N qubits undergoes a dephasing process, a rotation around the $|0\rangle\langle 0|$, $|1\rangle\langle 1|$ eigenstates of $\hat{J}_z$, for example. Then under such a rotation, a random phase, $\phi$, will be created between the eigenstates $|0\rangle$, $|1\rangle$ of $\hat{J}_z$. Thus these basis qubits $|0\rangle$ and $|1\rangle$ will transform in the following way:

$$|0\rangle \to |0\rangle, \qquad |1\rangle \to e^{i\phi} |1\rangle$$

This transformation is performed by the rotation operator

$$R_z(\phi) = \begin{pmatrix} 1 & 0 \\ 0 & e^{i\phi} \end{pmatrix}$$

Since any qubit in this space can be expressed in terms of the basis qubits, all such qubits will be transformed under this rotation. Consider a qubit in a pure state $|\psi\rangle = a|0\rangle + b|1\rangle$. This state will decohere since it is not "encoded" with the dephasing factor $e^{i\phi}$. This can be seen by examining the density matrix averaged over all values of $\phi$:

$$\rho = \int_{-\infty}^{\infty} R_z(\phi) |\psi\rangle\langle\psi| R_z^\dagger(\phi) \, p(\phi) \, d\phi$$

where $p(\phi)$ is a probability density. If $p(\phi)$ is given as a Gaussian distribution

$$p(\phi) = \frac{1}{\sqrt{4\pi\alpha}} e^{-\phi^2/(4\alpha)}$$

then the density matrix is

$$\rho = \begin{pmatrix} |a|^2 & a b^* e^{-\alpha} \\ a^* b \, e^{-\alpha} & |b|^2 \end{pmatrix}$$

Since the off-diagonal elements (the coherence terms) decay for increasing $\alpha$, the density matrices for the various qubits of the system will be indistinguishable. This means that no measurement can distinguish between the qubits, thus creating decoherence between the various qubit states. In particular, this dephasing process causes the qubits to collapse onto the $|0\rangle\langle 0|$, $|1\rangle\langle 1|$ axis. This is why this type of decoherence process is called collective dephasing, because the mutual phases between all qubits of the N-qubit system are destroyed.
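The averaging over the random phase can be reproduced numerically. The sketch below assumes a qubit a|0⟩ + b|1⟩ whose |1⟩ component picks up a phase φ drawn from a zero-mean Gaussian with variance 2α; this normalisation is an assumption made here, chosen so that the averaged coherence comes out as a·b*·e^(−α). The integral is approximated with the trapezoidal rule, and the function names and grid parameters are illustrative.

```python
import cmath
import math


def gaussian_pdf(phi, alpha):
    """Zero-mean Gaussian density with variance 2*alpha."""
    return math.exp(-phi ** 2 / (4 * alpha)) / math.sqrt(4 * math.pi * alpha)


def averaged_density_matrix(a, b, alpha, half_width=12.0, n=20001):
    """Average of R(phi)|psi><psi|R(phi)^dagger over phi, via the trapezoidal rule."""
    sigma = math.sqrt(2 * alpha)
    lower = -half_width * sigma
    step = 2 * half_width * sigma / (n - 1)
    norm = 0.0       # numerical integral of the density (should be close to 1)
    coherence = 0j   # numerical average of exp(-i*phi)
    for k in range(n):
        phi = lower + k * step
        weight = step * gaussian_pdf(phi, alpha)
        if k in (0, n - 1):
            weight *= 0.5  # trapezoid end points count half
        norm += weight
        coherence += weight * cmath.exp(-1j * phi)
    off_diagonal = a * b.conjugate() * coherence
    return [[abs(a) ** 2 * norm, off_diagonal],
            [off_diagonal.conjugate(), abs(b) ** 2 * norm]]


if __name__ == "__main__":
    s = 1 / math.sqrt(2)
    for alpha in (0.1, 1.0, 5.0):
        rho = averaged_density_matrix(s, s, alpha)
        print(alpha, abs(rho[0][1]), 0.5 * math.exp(-alpha))
```

The populations on the diagonal are unaffected by the average, while the coherence shrinks towards zero as the spread of the random phase grows.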

Depolarizing

Depolarizing is a non-unitary transformation on a quantum system which maps pure states to mixed states. This is a non-unitary process, because any transformation that reverses this process will map states out of their respective Hilbert space thus not preserving positivity (i.e. the original probabilities are mapped to negative probabilities, which is not allowed). The 2-dimensional case of such a transformation would consist of mapping pure states on the surface of the Bloch sphere to mixed states within the Bloch sphere. This would contract the Bloch sphere by some finite amount and the reverse process would expand the Bloch sphere, which cannot happen.
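The contraction of the Bloch sphere can be made concrete with the standard single-qubit depolarizing channel, which mixes a state with the maximally mixed state I/2 with probability p. The passage does not fix a particular parametrisation, so this form, the value p = 0.3 and the function names are assumptions made for illustration.

```python
import math


def depolarize(rho, p):
    """Mix a qubit density matrix with the maximally mixed state I/2 with weight p."""
    identity_half = [[0.5, 0.0], [0.0, 0.5]]
    return [[(1 - p) * rho[i][j] + p * identity_half[i][j] for j in range(2)]
            for i in range(2)]


def bloch_vector(rho):
    """Bloch vector (x, y, z) of rho = (I + x*X + y*Y + z*Z) / 2."""
    off_diagonal = complex(rho[0][1])
    x = 2 * off_diagonal.real
    y = -2 * off_diagonal.imag
    z = complex(rho[0][0] - rho[1][1]).real
    return (x, y, z)


def bloch_length(rho):
    """Length of the Bloch vector: 1 for pure states, less than 1 for mixed states."""
    x, y, z = bloch_vector(rho)
    return math.sqrt(x * x + y * y + z * z)


if __name__ == "__main__":
    plus = [[0.5, 0.5], [0.5, 0.5]]  # pure state on the surface of the Bloch sphere
    print(bloch_length(plus), bloch_length(depolarize(plus, 0.3)))
```

Every Bloch vector is scaled by the same factor (1 − p), so the whole sphere shrinks uniformly towards its centre; undoing this would require stretching vectors beyond unit length, which no valid state allows.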

Dissipation

Dissipation is a decohering process by which the populations of quantum states are changed due to entanglement with a bath. An example of this would be a quantum system that can exchange its energy with a bath through the interaction Hamiltonian. If the system is not in its ground state and the bath is at a temperature lower than that of the system, then the system will give off energy to the bath, and thus higher-energy eigenstates of the system Hamiltonian will decohere to the ground state after cooling. Since states that were initially different all end up in the same state, they can no longer be distinguished, and thus this process is irreversible (non-unitary).

Timescales

Decoherence represents an extremely fast process for macroscopic objects, since these are interacting with many microscopic objects, with an enormous number of degrees of freedom, in their natural environment. The process explains why we tend not to observe quantum behaviour in everyday macroscopic objects. It also explains why we do see classical fields emerge from the properties of the interaction between matter and radiation for large amounts of matter. The time taken for off-diagonal components of the density matrix to effectively vanish is called the decoherence time, and is typically extremely short for everyday, macroscale processes.

Measurement

The discontinuous "wave function collapse" postulated in the Copenhagen interpretation to enable the theory to be related to the results of laboratory measurements now can be understood as an aspect of the normal dynamics of quantum mechanics via the decoherence process. Consequently, decoherence is an important part of the modern alternative to the Copenhagen interpretation, based on consistent histories. Decoherence shows how a macroscopic system interacting with a lot of microscopic systems (e.g. collisions with air molecules or photons) moves from being in a pure quantum state—which in general will be a coherent superposition (see Schrödinger's cat)—to being in an incoherent mixture of these states. The weighting of each outcome in the mixture in case of measurement is exactly that which gives the probabilities of the different results of such a measurement.

However, decoherence by itself may not give a complete solution of the measurement problem, since all components of the wave function still exist in a global superposition, which is explicitly acknowledged in the many-worlds interpretation. All decoherence explains, in this view, is why these coherences are no longer available for inspection by local observers. To present a solution to the measurement problem in most interpretations of quantum mechanics, decoherence must be supplied with some nontrivial interpretational considerations (as for example Wojciech Zurek tends to do in his Existential interpretation). However, according to Everett and DeWitt the many-worlds interpretation can be derived from the formalism alone, in which case no extra interpretational layer is required.

Mathematical details

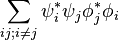

We assume for the moment the system in question consists of a subsystem being studied, A, and the "environment" $\epsilon$, and the total Hilbert space is the tensor product of a Hilbert space describing A, $H_A$, and a Hilbert space describing $\epsilon$, $H_\epsilon$: that is,

$$H = H_A \otimes H_\epsilon.$$

This is a reasonably good approximation in the case where A and $\epsilon$ are relatively independent (e.g. there is nothing like parts of A mixing with parts of $\epsilon$ or vice versa). The point is, the interaction with the environment is for all practical purposes unavoidable (e.g. even a single excited atom in a vacuum would emit a photon which would then go off). Let's say this interaction is described by a unitary transformation U acting upon H. Assume the initial state of the environment is $|\text{in}\rangle$ and the initial state of A is the superposition state

$$c_1 |\psi_1\rangle + c_2 |\psi_2\rangle$$

where $|\psi_1\rangle$ and $|\psi_2\rangle$ are orthogonal and there is no entanglement initially. Also, choose an orthonormal basis for $H_A$, $\{|e_i\rangle\}_i$. (This could be a "continuously indexed basis" or a mixture of continuous and discrete indexes, in which case we would have to use a rigged Hilbert space and be more careful about what we mean by orthonormal, but that's an inessential detail for expository purposes.) Then, we can expand

$$U\big(|\psi_1\rangle \otimes |\text{in}\rangle\big)$$

and

$$U\big(|\psi_2\rangle \otimes |\text{in}\rangle\big)$$

uniquely as

$$\sum_i |e_i\rangle \otimes |f_{1i}\rangle$$

and

$$\sum_i |e_i\rangle \otimes |f_{2i}\rangle$$

respectively. One thing to realize is that the environment contains a huge number of degrees of freedom, a good number of them interacting with each other all the time. This makes the following assumption reasonable in a handwaving way, which can be shown to be true in some simple toy models. Assume that there exists a basis for $H_A$ such that $|f_{1i}\rangle$ and $|f_{1j}\rangle$ are all approximately orthogonal to a good degree if i is not j, and the same thing for $|f_{2i}\rangle$ and $|f_{2j}\rangle$, and also $|f_{1i}\rangle$ and $|f_{2j}\rangle$ for any i and j (the decoherence property).

This often turns out to be true (as a reasonable conjecture) in the position basis because how A interacts with the environment would often depend critically upon the position of the objects in A. Then, if we take the partial trace over the environment, we'd find the density state is approximately described by

$$\sum_i \big( |c_1|^2 \langle f_{1i}|f_{1i}\rangle + |c_2|^2 \langle f_{2i}|f_{2i}\rangle \big) |e_i\rangle\langle e_i|$$

(i.e. we have a diagonal mixed state and there is no constructive or destructive interference and the "probabilities" add up classically). The time it takes for U(t) (the unitary operator as a function of time) to display the decoherence property is called the decoherence time.

Experimental observation

The collapse of a quantum superposition into a single definite state was quantitatively measured for the first time by Serge Haroche and his co-workers at the École Normale Supérieure in Paris in 1996.[8] Their approach involved sending individual rubidium atoms, each in a superposition of two states, through a microwave-filled cavity. The two quantum states both cause shifts in the phase of the microwave field, but by different amounts, so that the field itself is also put into a superposition of two states. As the cavity field exchanges energy with its surroundings, however, its superposition appears to collapse into a single definite state.

Haroche and his colleagues measured the resulting decoherence via correlations between the energy levels of pairs of atoms sent through the cavity with various time delays between the atoms.

In interpretations of quantum mechanics

Before an understanding of decoherence was developed, the Copenhagen interpretation of quantum mechanics treated wavefunction collapse as a fundamental, a priori process. Decoherence provides an explanatory mechanism for the appearance of wavefunction collapse and was first developed by David Bohm in 1952, who applied it to Louis de Broglie's pilot wave theory, producing Bohmian mechanics,[9][10] the first successful hidden variables interpretation of quantum mechanics. Decoherence was then used by Hugh Everett in 1957 to form the core of his many-worlds interpretation.[11] However, decoherence was largely[12] ignored for many years, and not until the 1980s[13][14] and 1990s did decoherence-based explanations of the appearance of wavefunction collapse become popular, with the greater acceptance of the use of reduced density matrices.[5] The range of decoherent interpretations has subsequently been extended around the idea, such as consistent histories. Some versions of the Copenhagen interpretation have been rebranded to include decoherence.

Decoherence does not provide a mechanism for the actual wave function collapse; rather, it provides a mechanism for the appearance of wavefunction collapse. The quantum nature of the system is simply "leaked" into the environment, so that a total superposition of the wavefunction still exists but lies, at least for all practical purposes,[15] beyond the realm of measurement. Thus decoherence, as a philosophical interpretation, amounts to either Bohmian mechanics or something similar to the many-worlds approach.[16]
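The "leak" of coherence into the environment can be made concrete with a toy model. The sketch below assumes a single system qubit entangled with a small environment in the joint state a|0>|E0> + b|1>|E1> and traces out the environment by hand; the function names and the choice of environment vectors are illustrative assumptions, not a model of any particular experiment.

```python
import math

def inner(u, v):
    """Inner product <u|v> of two state vectors given as lists."""
    return sum(complex(x).conjugate() * y for x, y in zip(u, v))

def reduced_system_state(a, b, env0, env1):
    """Trace out the environment from a|0>|env0> + b|1>|env1>.

    Returns the 2x2 reduced density matrix of the system qubit.
    Its off-diagonal element is a * conj(b) * <env1|env0>.
    """
    rho01 = a * complex(b).conjugate() * inner(env1, env0)
    return [[abs(a) ** 2 * inner(env0, env0).real, rho01],
            [rho01.conjugate(), abs(b) ** 2 * inner(env1, env1).real]]

def purity(rho):
    """Tr(rho^2): 1 for a pure state, 1/2 for a maximally mixed qubit."""
    return sum((rho[i][j] * rho[j][i]).real
               for i in range(2) for j in range(2))

s = 1 / math.sqrt(2)
# Environment has not distinguished the two system states.
unrecorded = reduced_system_state(s, s, [1, 0], [1, 0])
# Environment holds a perfect record of which state the system is in.
recorded = reduced_system_state(s, s, [1, 0], [0, 1])
print("purity, identical environment states: ", purity(unrecorded))
print("purity, orthogonal environment states:", purity(recorded))
```

In both cases the joint state of system plus environment is a normalized pure state. Only the reduced state of the system changes: when the environment states are orthogonal, the off-diagonal element vanishes and the system alone looks like a classical mixture, even though no collapse of the total superposition has occurred.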

See also

- Einselection

- Interpretations of Quantum Mechanics

- Partial trace

- Quantum entanglement

- Quantum superposition

- H. Dieter Zeh

- Wojciech Zurek

- Quantum Darwinism

- Quantum Zeno effect

- Ghirardi-Rimini-Weber theory

- Objective collapse theory

References

- Decoherence, the measurement problem, and interpretations of quantum mechanics, from arXiv

- Decoherence, control, and symmetry in quantum computers, from arXiv

- Decoherence-free subspaces and subsystems, from arXiv

- Wojciech H. Zurek, Decoherence, einselection, and the quantum origins of the classical, Reviews of Modern Physics, 75, p. 715 (2003) or http://arxiv.org/abs/quant-ph/0105127

- Wojciech H. Zurek, Decoherence and the transition from quantum to classical, Physics Today, 44, pp. 36–44 (1991)

- Wojciech H. Zurek, Decoherence and the Transition from Quantum to Classical—Revisited, Los Alamos Science, Number 27 (2002)

- Decoherence-free subspaces for quantum computation, from arXiv

- Observing the Progressive Decoherence of the “Meter” in a Quantum Measurement, Physical Review Letters, 77, pp. 4887–4890 (1996), via a website of the American Physical Society

- David Bohm, A Suggested Interpretation of the Quantum Theory in Terms of "Hidden Variables", I, Physical Review, 85, pp. 166–179 (1952)

- David Bohm, A Suggested Interpretation of the Quantum Theory in Terms of "Hidden Variables", II, Physical Review, 85, pp. 180–193 (1952)

- Hugh Everett, Relative State Formulation of Quantum Mechanics, Reviews of Modern Physics, 29, pp. 454–462 (1957)

- H. Dieter Zeh, On the Interpretation of Measurement in Quantum Theory, Foundations of Physics, 1, pp. 69–76 (1970)

- Wojciech H. Zurek, Pointer Basis of Quantum Apparatus: Into what Mixture does the Wave Packet Collapse?, Physical Review D, 24, pp. 1516–1525 (1981)

- Wojciech H. Zurek, Environment-Induced Superselection Rules, Physical Review D, 26, pp. 1862–1880 (1982)

- Roger Penrose, The Road to Reality, pp. 802–803. "...the environmental-decoherence viewpoint..maintains that state vector reduction [the R process ] can be understood as coming about because the environmental system under consideration becomes inextricably entangled with its environment.[...] We think of the environment as extremely complicated and essentially 'random' [..], accordingly we sum over the unknown states in the environment to obtain a density matrix[...] Under normal circumstances, one must regard the density matrix as some kind of approximation to the whole quantum truth. For there is no general principle providing an absolute bar to extracting information from the environment.[...] Accordingly, such descriptions are referred to as FAPP [For All Practical Purposes]"

- Huw Price, Time's Arrow and Archimedes' Point, p. 226. 'There is a world of difference between saying "the environment explains why collapse happens where it does" and saying "the environment explains why collapse seems to happen even though it doesn't really happen".'

Further reading

- Schlosshauer, Maximilian (2007). Decoherence and the Quantum-to-Classical Transition (1st ed.). Berlin/Heidelberg: Springer.

- Joos, E.; et al. (2003). Decoherence and the Appearance of a Classical World in Quantum Theory (2nd ed.). Berlin: Springer.

- Omnes, R. (1999). Understanding Quantum Mechanics. Princeton: Princeton University Press.

- Zurek, Wojciech H. (2003). "Decoherence and the transition from quantum to classical — REVISITED", arΧiv:quant-ph/0306072 (An updated version of PHYSICS TODAY, 44:36–44 (1991) article)

- Schlosshauer, Maximilian (23 February 2005). "Decoherence, the Measurement Problem, and Interpretations of Quantum Mechanics". Reviews of Modern Physics 76 (2004): 1267–1305. arXiv:quant-ph/0312059.

- J.J. Halliwell, J. Perez-Mercader, Wojciech H. Zurek, eds, The Physical Origins of Time Asymmetry, Part 3: Decoherence, ISBN 0-521-56837-4

- Berthold-Georg Englert, Marlan O. Scully & Herbert Walther, Quantum Optical Tests of Complementarity, Nature, Vol 351, pp 111–116 (9 May 1991) and (same authors) The Duality in Matter and Light, Scientific American, pp 56–61 (December 1994). Demonstrates that complementarity is enforced, and quantum interference effects destroyed, by irreversible object-apparatus correlations, and not, as was previously popularly believed, by Heisenberg's uncertainty principle itself.

- Mario Castagnino, Sebastian Fortin, Roberto Laura and Olimpia Lombardi, A general theoretical framework for decoherence in open and closed systems, Classical and Quantum Gravity, 25, pp.154002-154013, (2008). A general theoretical framework for decoherence is proposed, which encompasses formalisms originally devised to deal just with open or closed systems.

External links

- A very lucid description of decoherence from www.ipod.org.uk/reality

- http://www.decoherence.info

- http://plato.stanford.edu/entries/qm-decoherence/

- Decoherence, the measurement problem, and interpretations of quantum mechanics from arXiv

- Measurements and Decoherence from arXiv

- A Detailed introduction from a graduate student's website at Drexel University

- Quantum Bug: Qubits might spontaneously decay in seconds, Scientific American Magazine (October 2005)

$$\rho_{SB}(t) = \hat{U}(t)\,[\rho_{S}(0)\otimes\rho_{B}(0)]\,\hat{U}^{\dagger}(t).$$

$$\rho_{S}(t) = \mathrm{Tr}_B\big[\hat{U}(t)\,[\rho_{S}(0)\otimes\rho_{B}(0)]\,\hat{U}^{\dagger}(t)\big].$$

$$\rho_{S}^{\prime}(t) = \frac{-i}{\hbar}\big[\mathbf{\tilde{H}_{S}},\rho_{S}(t)\big] + L_{D}\big[\rho_{S}(t)\big]$$

$$L_{D}\big[\rho_{S}(t)\big] = \frac{1}{2}\sum_{\alpha,\beta = 1}^{M}b_{\alpha\beta}\big(\big[\mathbf{F}_{\alpha}, \rho_{S}(t)\mathbf{F}^{\dagger}_{\beta}\big] + \big[\mathbf{F}_{\alpha}\rho_{S}(t), \mathbf{F}^{\dagger}_{\beta}\big]\big).$$