Latent Dirichlet allocation

From Wikipedia, the free encyclopedia

In statistics, latent Dirichlet allocation (LDA) is a generative model that allows sets of observations to be explained by unobserved groups which explain why some parts of the data are similar. For example, if observations are words collected into documents, it posits that each document is a mixture of a small number of topics and that each word's creation is attributable to one of the document's topics. LDA was first presented as a graphical model for topic discovery and was developed by David Blei, Andrew Ng, and Michael Jordan in 2003.[1]

Contents |

[edit] Topics in LDA

In LDA, each document may be viewed as a mixture of various topics. This is similar to probabilistic latent semantic analysis (pLSA), except that in LDA the topic distribution is assumed to have a Dirichlet prior. In practice, this results in more reasonable mixtures of topics in a document. It has been noted, however, that the pLSA model is equivalent to the LDA model under a uniform Dirichlet prior distribution.[2]

For example, an LDA model might have topics CAT and DOG. The CAT topic has probabilities of generating various words: the words milk, meow, kitten and of course cat will have high probability given this topic. The DOG topic likewise has probabilities of generating each word: puppy, bark and bone might have high probability. Words without special relevance, like the (see function word), will have roughly even probability between classes (or can be placed into a separate category).

A document is generated by picking a distribution over topics (ie, mostly about DOG, mostly about CAT, or a bit of both), and given this distribution, picking the topic of each specific word. Then words are generated given their topics. (Notice that words are considered to be independent given the topics. This is a standard bag of words model assumption, and makes the individual words exchangeable.)

[edit] Model

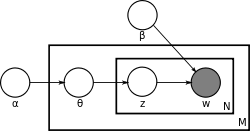

With plate notation, the dependencies among the many variables can be captured concisely. α is the parameter of the uniform Dirichlet prior on the per-document topic distributions. β is the parameter of the uniform Dirichlet prior on the per-topic word distribution. θi is the topic distribution for document i, zij is the topic for the jth word in document i, and wij is the specific word. The wij are the only observable variables, and the other variables are latent variables.

[edit] Inference

Learning the various distributions (the set of topics, their associated word probabilities, the topic of each word, and the particular topic mixture of each document) is a problem of Bayesian inference. The original paper used a variational Bayes approximation of the posterior distribution[1]; alternative inference techniques use Gibbs sampling[3] and expectation propagation[4].

[edit] Applications, extensions and similar techniques

Topic modeling is a classic problem in information retrieval. Related models and techniques are, among others, latent semantic indexing, probabilistic latent semantic indexing, non-negative matrix factorization, Gamma-Poisson.

The LDA model is highly modular and can therefore be easily extended, the main field of interest being the modeling of relations between topics. This is achieved e.g. by using another distribution on the simplex instead of the Dirichlet. The Correlated Topic Model[5] follows this approach, inducing a correlation structure between topics by using the logistic normal distribution instead of the Dirichlet. Another extension is the hierarchical LDA (hLDA)[6], where topics are joined together in a hierarchy by using the Nested Chinese Restaurant Process.

As noted earlier, PLSA is similar to LDA. The LDA model is essentially the Bayesian version of PLSA model. Bayesian formulation tends to perform better on small datasets because Bayesian methods can avoid overfitting the data. In a very large dataset, the results are probably the same. One difference is that PLSA uses a variable d to represent a document in the training set. So in PLSA, when presented with a document the model hasn't seen before, we fix  --the probability of words under topics--to be that learned from the training set and use the same EM algorithm to infer

--the probability of words under topics--to be that learned from the training set and use the same EM algorithm to infer  --the topic distribution under d. Blei argues that this step is cheating because you are essentially refitting the model to the new data.

--the topic distribution under d. Blei argues that this step is cheating because you are essentially refitting the model to the new data.

[edit] See also

[edit] Notes

- ^ a b Blei, David M.; Ng, Andrew Y.; Jordan, Michael I (January 2003). "Latent Dirichlet allocation". Journal of Machine Learning Research 3: pp. 993–1022. doi:10.1162/jmlr.2003.3.4-5.993 (inactive 2009-03-30). http://jmlr.csail.mit.edu/papers/v3/blei03a.html.

- ^ Girolami, Mark; Kaban, A. (2003). "On an Equivalence between PLSI and LDA" in Proceedings of SIGIR 2003., New York: Association for Computing Machinery. ISBN 1581136463.

- ^ Griffiths, Thomas L.; Steyvers, Mark (April 6 2004). "Finding scientific topics". Proceedings of the National Academy of Sciences 101 (Suppl. 1): 5228–5235. doi:. PMID 14872004.

- ^ Minka, Thomas; Lafferty, John (2002). "Expectation-propagation for the generative aspect model" in Proceedings of the 18th Conference on Uncertainty in Artificial Intelligence., San Francisco, CA: Morgan Kaufmann. ISBN 1-55860-897-4.

- ^ Blei, David M.; Lafferty, John D. (2006). "Correlated topic models". Advances in Neural Information Processing Systems 18. http://www.cs.cmu.edu/~lafferty/pub/ctm.pdf.

- ^ Blei, David M.; Jordan, Michael I.; Griffiths, Thomas L.; Tenenbaum; Joshua B (2004). "Hierarchical Topic Models and the Nested Chinese Restaurant Process" in Advances in Neural Information Processing Systems 16: Proceedings of the 2003 Conference., MIT Press. ISBN 0262201526.

[edit] External links

- LDA implementation in MATLAB, by M. Steyvers and T. Griffiths.

- LDA implementation in C, by D. Blei.

- LDA implementations in C and matlab.

- LDA implementation in C++ using Gibbs Sampling.