Intelligent agent

From Wikipedia, the free encyclopedia

In artificial intelligence, an intelligent agent (IA) is an autonomous entity which observes and acts upon an environment (i.e. it is an agent) and directs its activity towards achieving goals (i.e. it is rational).[1] Intelligent agents may also learn or use knowledge to achieve their goals. They may be very simple or very complex: a reflex machine such as a thermostat is an intelligent agent, as is a human being, as is a community of human beings working together towards a goal.

Intelligent agents are often described schematically as an abstract functional system similar to a computer program. For this reason, intelligent agents are sometimes called abstract intelligent agents (AIA) to distinguish them from their real-world implementations as computer systems, biological systems, or organizations. Some definitions of intelligent agents emphasize their autonomy, and so prefer the term autonomous intelligent agents. Still others (notably Russell & Norvig (2003)) consider goal-directed behavior to be the essence of intelligence and so prefer a term borrowed from economics, "rational agent".

Intelligent agents in artificial intelligence are closely related to agents in economics, and versions of the intelligent agent paradigm are studied in cognitive science, ethics, and the philosophy of practical reason, as well as in many interdisciplinary socio-cognitive modeling and computer social simulations.

Intelligent agents are also closely related to software agents (autonomous software programs that carry out tasks on behalf of users). In computer science, the term intelligent agent may be used to refer to a software agent that has some intelligence, regardless of whether it is a rational agent by Russell and Norvig's definition. For example, autonomous programs used for operator assistance or data mining (sometimes referred to as bots) are also called "intelligent agents".


A variety of definitions


Intelligent agents have been defined in many different ways.[2] According to Nikola Kasabov,[3] IA systems should exhibit the following characteristics:

- accommodate new problem solving rules incrementally

- adapt online and in real time

- be able to analyze themselves in terms of behavior, error and success

- learn and improve through interaction with the environment (embodiment)

- learn quickly from large amounts of data

- have memory-based exemplar storage and retrieval capacities

- have parameters to represent short and long term memory, age, forgetting, etc.

Structure of agents


A simple agent program can be defined mathematically as an agent function[4] which maps every possible percept sequence to a possible action the agent can perform, or to a coefficient, feedback element, function or constant that affects eventual actions:

f : P* → A

Here P* denotes the set of percept sequences and A the set of actions. The agent program, by contrast, maps each individual percept to an action.
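The difference between a function defined over whole percept sequences and a program that receives one percept at a time can be illustrated with a minimal Python sketch. The lookup table, the percept names and the action names are hypothetical and chosen only for illustration; real agent functions are rarely small enough to tabulate.

```python
from typing import Callable, Dict, List, Tuple

Percept = str
Action = str

# Hypothetical toy agent function: one entry per complete percept sequence.
AGENT_FUNCTION: Dict[Tuple[Percept, ...], Action] = {
    ("cold",): "heat_on",
    ("cold", "cold"): "heat_on",
    ("cold", "warm"): "heat_off",
    ("warm",): "heat_off",
}


def agent_function(percepts: Tuple[Percept, ...]) -> Action:
    """Map a whole percept sequence to an action; unknown sequences give 'noop'."""
    return AGENT_FUNCTION.get(percepts, "noop")


def make_agent_program() -> Callable[[Percept], Action]:
    """Build a program that is fed one percept per call and keeps the history itself."""
    history: List[Percept] = []

    def program(percept: Percept) -> Action:
        history.append(percept)  # remember everything perceived so far
        return agent_function(tuple(history))

    return program


program = make_agent_program()
first = program("cold")   # looks up ("cold",)
second = program("warm")  # looks up ("cold", "warm")
```

In this sketch the program only ever sees the current percept as its argument; the percept history that the agent function needs is state the program accumulates internally.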

Classes of intelligent agents

Russell & Norvig (2003) group agents into five classes based on their degree of perceived intelligence and capability:[5]

- simple reflex agents

- model-based reflex agents

- goal-based agents

- utility-based agents

- learning agents

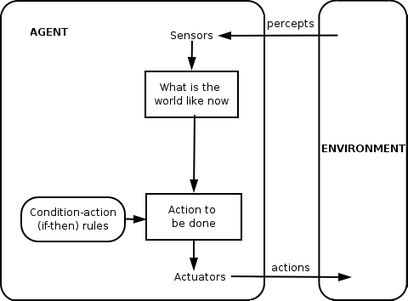

- Simple reflex agents

Simple reflex agents act only on the basis of the current percept. The agent function is based on the condition-action rule: if condition then action.

This agent function only succeeds when the environment is fully observable. Some reflex agents can also contain information on their current state which allows them to disregard conditions whose actuators are already triggered.
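A condition-action rule set can be sketched in a few lines of Python. The thermostat thresholds and action names below are assumptions made for the example, not values from the source.

```python
from typing import Callable, List, Tuple

Percept = float  # hypothetical percept: a temperature reading in degrees Celsius
Rule = Tuple[Callable[[Percept], bool], str]

# Each rule pairs a condition on the current percept with an action.
RULES: List[Rule] = [
    (lambda t: t < 18.0, "heat_on"),
    (lambda t: t > 22.0, "heat_off"),
]


def simple_reflex_agent(percept: Percept, rules: List[Rule] = RULES) -> str:
    """Return the action of the first rule whose condition matches the percept."""
    for condition, action in rules:
        if condition(percept):
            return action
    return "noop"  # no rule fired


action_when_cold = simple_reflex_agent(15.0)
```

The agent keeps no memory between calls, so its choice depends only on the reading it is given.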

- Model-based reflex agents

Model-based agents can handle partially observable environments. Their current state is stored inside the agent, which maintains some kind of structure describing the part of the world that cannot be seen. This behavior requires information on how the world behaves and works. This additional information completes the “World View” model.

A model-based reflex agent keeps track of the current state of the world using an internal model. It then chooses an action in the same way as the reflex agent.
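As a rough illustration, the following Python sketch uses a two-square vacuum world in the spirit of the textbook example. The square names, percept format and action names are assumptions. The agent perceives only the square it is standing on, and uses its internal model to remember the other one.

```python
from typing import Dict, Optional, Tuple


class ModelBasedVacuumAgent:
    """Reflex agent that remembers the last known status of each square."""

    def __init__(self) -> None:
        # Internal model of the world; None means "never observed".
        self.model: Dict[str, Optional[str]] = {"A": None, "B": None}

    def act(self, percept: Tuple[str, str]) -> str:
        location, status = percept
        self.model[location] = status  # update the model from the percept
        if status == "dirty":
            # Model of how the world works: sucking leaves the square clean.
            self.model[location] = "clean"
            return "suck"
        other = "B" if location == "A" else "A"
        if self.model[other] != "clean":
            # The unseen square is not known to be clean, so go and check it.
            return "move_right" if other == "B" else "move_left"
        return "noop"


agent = ModelBasedVacuumAgent()
actions = [agent.act(p) for p in [("A", "dirty"), ("A", "clean"), ("B", "clean")]]
```

Once both squares are recorded as clean, the agent stops moving, which a memoryless reflex agent could not decide from a single local percept.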

- Goal-based agents

Goal-based agents are model-based agents which store information regarding situations that are desirable. This gives the agent a way to choose among multiple possibilities, selecting the one which reaches a goal state.
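One common way to select actions that reach a goal state is to search the agent's model of the world. The sketch below uses breadth-first search over a hypothetical transition table; the room names and actions are invented for the example, and other search or planning methods would serve equally well.

```python
from collections import deque
from typing import Dict, List, Optional

# Hypothetical world model: state -> {action: resulting state}.
TRANSITIONS: Dict[str, Dict[str, str]] = {
    "hall": {"go_kitchen": "kitchen", "go_study": "study"},
    "kitchen": {"go_hall": "hall", "go_pantry": "pantry"},
    "study": {"go_hall": "hall"},
    "pantry": {"go_kitchen": "kitchen"},
}


def plan(start: str, goal: str) -> Optional[List[str]]:
    """Return a shortest action sequence from start to goal, or None if unreachable."""
    frontier = deque([(start, [])])
    visited = {start}
    while frontier:
        state, actions = frontier.popleft()
        if state == goal:
            return actions
        for action, next_state in TRANSITIONS.get(state, {}).items():
            if next_state not in visited:
                visited.add(next_state)
                frontier.append((next_state, actions + [action]))
    return None


route = plan("hall", "pantry")
```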

- Utility-based agents

Goal-based agents only distinguish between goal states and non-goal states. It is possible to define a measure of how desirable a particular state is. This measure can be obtained through the use of a utility function which maps a state to a measure of the utility of the state.
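The contrast with a goal test can be made concrete in a short Python sketch. The outcome states and utility values here are assumed for illustration: two outcomes both satisfy the goal of arriving, but the utility function ranks them differently.

```python
from typing import Callable, Dict

# Hypothetical model: each available action leads to one resulting state.
OUTCOMES: Dict[str, str] = {
    "highway": "arrive_fast",
    "back_road": "arrive_slow",
    "stay": "not_arrived",
}

# Hypothetical utility values; a goal test would treat both arrivals alike.
UTILITY: Dict[str, float] = {
    "arrive_fast": 1.0,
    "arrive_slow": 0.6,
    "not_arrived": 0.0,
}


def choose_action(outcomes: Dict[str, str], utility: Callable[[str], float]) -> str:
    """Pick the action whose resulting state has the highest utility."""
    return max(outcomes, key=lambda action: utility(outcomes[action]))


best = choose_action(OUTCOMES, UTILITY.__getitem__)
```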

- Learning agents

Learning has the advantage that it allows agents to operate initially in unknown environments and to become more competent than their initial knowledge alone might allow.
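A very small sketch of this idea, under the assumption that the environment returns a numeric reward after each action: the agent starts with no knowledge, tries each action once, and then prefers the action with the best average reward so far. This is one simple learning scheme among many, not the specific architecture described by Russell & Norvig, and the action names and reward values are hypothetical.

```python
from typing import Dict, List


class LearningAgent:
    """Learns average reward per action and acts greedily on those estimates."""

    def __init__(self, actions: List[str]) -> None:
        self.estimates: Dict[str, float] = {a: 0.0 for a in actions}
        self.counts: Dict[str, int] = {a: 0 for a in actions}

    def choose(self) -> str:
        # Try every action once before trusting the estimates.
        for action, count in self.counts.items():
            if count == 0:
                return action
        return max(self.estimates, key=lambda a: self.estimates[a])

    def learn(self, action: str, reward: float) -> None:
        # Incremental update of the running average reward for this action.
        self.counts[action] += 1
        n = self.counts[action]
        self.estimates[action] += (reward - self.estimates[action]) / n


# Hypothetical environment: fixed reward per action, unknown to the agent.
REWARDS = {"left": 0.2, "right": 1.0}

learner = LearningAgent(["left", "right"])
for _ in range(5):
    chosen = learner.choose()
    learner.learn(chosen, REWARDS[chosen])
preferred = learner.choose()
```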

Other classes of intelligent agents

According to other sources[who?], some of the sub-agents (not already mentioned in this treatment) that may be part of an intelligent agent, or be complete intelligent agents in themselves, are:

- Decision Agents (that are geared to decision making);

- Input Agents (that process and make sense of sensor inputs, e.g. neural-network-based agents);

- Processing Agents (that solve a problem like speech recognition);

- Spatial Agents (that relate to the physical real-world);

- World Agents (that incorporate a combination of all the other classes of agents to allow autonomous behaviors).

- Believable agents - An agent exhibiting a personality via the use of an artificial character (the agent is embedded) for the interaction.

- Physical Agents - A physical agent is an entity which perceives through sensors and acts through actuators.

- Temporal Agents - A temporal agent may use time-based stored information to offer instructions or data to a computer program or human being, and takes program inputs as percepts to adjust its subsequent behavior.

Agent environments

Environments in which agents operate can be defined in different ways. It is helpful to view the following definitions as referring to the way the environment appears from the point of view of the agent itself.[6]

Observable vs. partially observable

In order for an agent to be considered an agent, some part of the environment - relevant to the action being considered - must be observable. In some cases (particularly in software) all of the environment will be observable by the agent. This, while useful to the agent, will generally only be true for relatively simple environments.

Deterministic vs. stochastic

An environment that is fully deterministic is one in which the subsequent state of the environment is wholly dependent on the preceding state and the actions of the agent. If an element of interference or uncertainty occurs then the environment is stochastic. Note that a deterministic yet partially observable environment will appear to be stochastic to the agent.

An environment state wholly determined by the preceding state and the actions of multiple agents is called strategic.
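The distinction can be expressed as two transition functions. In this Python sketch the integer state, the additive effect of an action and the `slip` probability are all assumptions made for the example.

```python
import random


def deterministic_step(state: int, action: int) -> int:
    """The next state depends only on the preceding state and the agent's action."""
    return state + action


def stochastic_step(state: int, action: int, rng: random.Random, slip: float = 0.2) -> int:
    """With probability `slip` the action has no effect, so the outcome is uncertain."""
    if rng.random() < slip:
        return state  # interference: the action failed
    return state + action


rng = random.Random(0)  # seeded only so that repeated runs give the same samples
samples = [stochastic_step(3, 1, rng) for _ in range(10)]
```

Every call to `deterministic_step(3, 1)` returns 4, whereas the values in `samples` may be either 3 or 4 depending on the random draw.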

Episodic vs. sequential

This refers to the task environment of the agent. If each task that the agent must perform does not rely upon past performance and will not affect future performance, then it is episodic. Otherwise it is sequential.

Static vs. dynamic

A static environment, as the name suggests, is one that does not change from one state to the next while the agent is considering its course of action. In other words, the only changes to the environment are those caused by the agent itself. A dynamic environment can change, and if an agent does not respond in a timely manner, this counts as a choice to do nothing.

Discrete vs. continuous

This distinction refers to whether the environment is composed of a finite or an infinite number of possible states. A discrete environment has a finite number of possible states; however, if this number is extremely high, the environment becomes virtually continuous from the agent's perspective.

Single-agent vs. multiple agent

An environment is only considered multiple agent if the agent under consideration must act cooperatively or competitively with another agent to realise some task or achieve a goal. Otherwise another agent is simply viewed as a stochastically behaving part of the environment.

Overview of environments

| rising order of complexity → | |
| --- | --- |
| Observable | Partially observable |
| Deterministic | Stochastic |
| Episodic | Sequential |
| Static | Dynamic |
| Discrete | Continuous |
| Single-agent | Multiple agent |

Hierarchies of agents

To perform their functions, intelligent agents today are normally arranged in a hierarchical structure containing many “sub-agents”. Intelligent sub-agents process and perform lower-level functions. Taken together, the intelligent agent and sub-agents create a complete system that can accomplish difficult tasks or goals with behaviors and responses that display a form of intelligence.[citation needed]

See also

- Agents

- Cognitive architectures

- Cognitive radio - a practical field for implementation

- Cybernetics, Computer science

- Data mining agent

- Embodied agent

- Federated search - the ability for agents to search heterogeneous data sources using a single vocabulary

- Fuzzy agents - IA implemented with adaptive fuzzy logic

- Intelligence

- Intelligent system

- Multi-agent system and multiple-agent system - multiple interactive agents

- Reinforcement learning

- Semantic Web - making data on the Web available for automated processing by agents

- Simulated reality

- Social simulation

Notes

- [1] Russell & Norvig 2003, chpt. 2

- [2] Some definitions are examined by Franklin & Graesser 1996 and Kasabov 1998.

- [3] Kasabov 1998

- [4] Russell & Norvig 2003, p. 33

- [5] Russell & Norvig 2003, pp. 46-54

- [6] Russell & Norvig 2003, pp. 38-44

References

- Russell, Stuart J.; Norvig, Peter (2003), Artificial Intelligence: A Modern Approach (2nd ed.), Upper Saddle River, NJ: Prentice Hall, ISBN 0-13-790395-2, http://aima.cs.berkeley.edu/, chpt. 2

- Franklin, Stan; Graesser, Art (1996), Is it an Agent, or just a Program?: A Taxonomy for Autonomous Agents, Proceedings of the Third International Workshop on Agent Theories, Architectures, and Languages, Springer-Verlag

- Kasabov, N. (1998), Introduction: Hybrid intelligent adaptive systems, International Journal of Intelligent Systems, Vol. 6, 453-454